ChatGPT Guide: How to Use It Effectively (Prompts, Workflows & Tips)

Mastering ChatGPT: A Guide to Unlocking Its Full Potential in the Age of AI. In a world increasingly shaped by artificial intelligence, ChatGPT has emerged not just as a tool, but as a phenomenon. From drafting emails to generating complex code, its capabilities have captivated millions, hinting at a profound shift in how we interact with information and automate tasks. Yet, for many, the true power of ChatGPT remains untapped, limited to basic queries or simple requests. This guide from Future Insights is designed to elevate your understanding and interaction with this groundbreaking AI, transforming you from a casual user into a proficient prompt engineer. We’ll delve beyond the surface, exploring the nuances of effective communication with AI, revealing strategies to unlock its full potential, and preparing you for a future where human-AI collaboration is not just common, but essential.

- Prompt quality determines output quality — specificity, context, and constraints are everything.

- Iterative refinement (follow-up prompts) outperforms trying to craft the perfect single prompt.

- GPT-4o supports 128k token context and multimodal inputs (text + images).

- Never input sensitive PII or confidential company data into the free ChatGPT interface.

- Use the OpenAI API + LangChain for production workflows requiring consistent, scalable AI outputs.

Beyond the Hype: Understanding ChatGPT’s Core Capabilities and Limitations

Before we can effectively wield ChatGPT, it’s crucial to understand what it is and, equally important, what it isn’t. Dispelling common misconceptions is the first step toward harnessing its true power.

What ChatGPT Is (and Isn’t)

At its heart, ChatGPT is a Large Language Model (LLM) developed by OpenAI. It’s a type of generative AI built upon a neural network architecture known as a “transformer.” This architecture allows it to process and generate human-like text by identifying patterns and relationships in the vast datasets it was trained on—comprising billions of text passages from books, articles, websites, and more. When you pose a question or give it a command, ChatGPT doesn’t “think” in the human sense; instead, it predicts the most statistically probable sequence of words to form a coherent and contextually relevant response.

It is:

- A sophisticated text predictor.

- A powerful pattern recognition engine for language.

- A tool for generating creative text, summaries, code, and more.

- A conversational interface capable of maintaining context over multiple turns.

It is not:

- A sentient being with consciousness or understanding.

- A real-time search engine (though some versions/plugins can access web data).

- An infallible source of truth; it can “hallucinate” or generate incorrect information.

- A substitute for critical human judgment and expertise.

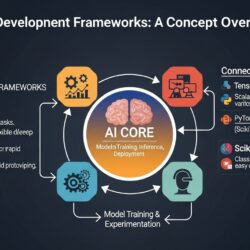

Understanding ChatGPT’s model landscape helps you choose the right tool: GPT-4o (OpenAI’s current flagship) supports 128k token context windows and multimodal inputs (text + images), while GPT-3.5 Turbo powers the free tier with faster responses and lower cost. For developers, the OpenAI API combined with frameworks like LangChain or LlamaIndex enables production-grade AI applications with retrieval-augmented generation (RAG), memory management, and agent orchestration. Third-party tools like Microsoft Copilot (built on GPT-4), Zapier’s AI workflows, and browser extensions extend ChatGPT’s reach into everyday tools. On security: GPT-4o costs approximately $5/$15 per million tokens (input/output), the default data retention is 30 days (opt-out available in Settings), and users should never input PII, financial data, or confidential company information into consumer-facing ChatGPT — consider OpenAI’s enterprise tier or private deployments for sensitive use cases.

The Power of Prediction

The magic of ChatGPT lies in its ability to predict the next token (a word or part of a word) in a sequence, based on the input it receives and the patterns it learned during training. This predictive power allows it to generate text that is remarkably coherent, contextually appropriate, and often indistinguishable from human-written content. When you ask it to write an email, it’s not recalling a specific email from its training data; it’s generating a new one, word by word, based on the statistical likelihood of how an email on that topic, with that tone, and for that recipient, would typically unfold.

Key Use Cases

ChatGPT’s versatility makes it applicable across a myriad of tasks. Fundamentally, any task that involves generating, summarizing, transforming, or understanding text can potentially benefit from its capabilities. This includes, but is not limited to:

- Brainstorming: Generating ideas for content, projects, or problem-solving.

- Drafting: Creating initial versions of emails, reports, articles, or creative works.

- Summarizing: Condensing long documents, articles, or meeting transcripts.

- Coding Assistance: Generating code snippets, debugging, or explaining programming concepts.

- Learning & Education: Explaining complex topics, creating study guides, or practicing language skills.

- Creative Writing: Developing story outlines, character descriptions, poetry, or dialogue.

Understanding these core capabilities forms the bedrock of effective interaction. The goal is to leverage its predictive power by providing it with the right context and direction.

The Art of Prompt Engineering: Crafting Inputs for Superior Outputs

5 Copy-Paste Prompt Templates That Work

Template 1 — Blog Post Draft:

“Act as a senior content strategist. Write a [word count]-word blog post for [target audience] on ‘[keyword]’. Tone: [tone]. Structure: intro + 5 H2 sections + FAQ + conclusion. Include [specific angle]. Avoid generic advice.”

Template 2 — Cold Email:

“Act as an experienced SDR. Write a cold email to [job title] at [company type] offering [product/service]. Pain point: [pain]. CTA: [action]. Under 120 words, conversational, no buzzwords.”

Template 3 — Code Review:

“Review this [language] code for bugs, performance issues, and security vulnerabilities. Explain each issue and suggest a fix. Code: [paste code]”

Template 4 — Research Summary:

“Summarize the following in 5 bullet points. Focus on: key findings, practical implications, and caveats. Text: [paste text]”

Template 5 — Chain-of-Thought:

“Think step by step. [Complex problem]. First, list key variables. Then reason through each option. Finally, recommend the best course of action with justification.”

The quality of ChatGPT’s output is directly proportional to the quality of your input. This principle gives rise to the discipline of “prompt engineering”—the art and science of crafting effective prompts to guide an AI model toward desired results. It’s less about finding a magic phrase and more about learning a structured approach to communication.

Clarity and Specificity: The Golden Rule

Vague prompts lead to vague responses. The more precise and unambiguous you are with your instructions, the better ChatGPT can understand and fulfill your request. Avoid open-ended questions that lack direction. Instead, break down your request into clear components.

Poor Prompt: “Write about AI.”

Better Prompt: “Write a 500-word blog post for a tech-savvy audience about the ethical implications of generative AI, focusing on data bias and job displacement. Use an authoritative yet accessible tone.”

Providing Context and Constraints

ChatGPT operates best when it understands the ‘who,’ ‘what,’ ‘where,’ ‘when,’ ‘why,’ and ‘how’ of your request. Think of yourself as a director giving instructions to a very capable actor.

- Define the Role/Persona: “Act as a senior marketing strategist…” or “You are a friendly customer service agent…”

- Specify the Audience: “Write for a general audience with no technical background…” or “Explain this concept to a 10-year-old.”

- Set the Tone: “Use a formal and professional tone,” “Be enthusiastic and encouraging,” “Adopt a critical and analytical stance.”

- Indicate the Format: “Generate a bulleted list,” “Write a 3-paragraph email,” “Produce a JSON object.”

- Specify Length: “Keep it under 200 words,” “Provide at least five distinct ideas.”

- Include Examples (Few-shot Prompting): For complex or nuanced tasks, providing one or more examples of the desired input-output pair can significantly improve results. E.g., “Here’s an example of how I want you to summarize a product review: [Example Review] -> [Example Summary]. Now summarize this review: [New Review].”

- Define “Forbidden” Elements: “Do not mention specific brand names,” “Avoid technical jargon.”

Iterative Refinement: The Conversation as a Tool

ChatGPT is a conversational AI, meaning it remembers previous turns in a conversation (within a session). This is a powerful feature for refining outputs. Don’t expect perfection from the first prompt. Instead, view your interaction as an ongoing dialogue.

- Ask Follow-up Questions: “Can you elaborate on point 3?” “What are some counter-arguments?”

- Request Revisions: “Make it sound more urgent,” “Shorten the second paragraph,” “Change the call to action.”

- Guide It Step-by-Step (Chain-of-Thought Prompting): For complex tasks, ask ChatGPT to break down its reasoning or approach before providing the final answer. E.g., “Think step-by-step how you would approach this problem, then provide the solution.” This often leads to more accurate and robust outputs.

This iterative process is key to moving from a good response to an excellent one.

Advanced Prompting Techniques

Beyond the basics, several techniques can unlock even greater capabilities:

- Role-Playing: Assigning a specific persona to ChatGPT significantly influences its output. For instance, “Act as a seasoned venture capitalist evaluating a startup pitch. What are your key concerns and questions for a company in the sustainable energy sector?”

- Delimiter Usage: For prompts containing multiple pieces of information or instructions, use clear delimiters (like triple quotes “”” or XML tags ) to separate sections. This helps the AI parse the input more effectively. E.g., “Summarize the following text, which is delimited by triple quotes: “””[Long Text Here]”””.”

- Conditional Instructions: Provide instructions that depend on certain conditions. E.g., “If the user asks for a recommendation, suggest a book on AI ethics. Otherwise, ask them about their favorite genre.”

- Output Formatting: Explicitly ask for specific output formats beyond plain text, such as Markdown, HTML.

“`html

ChatGPT for Production: RAG, LangChain, and Enterprise Workflows

While the consumer-facing ChatGPT interface is powerful for ad-hoc tasks, integrating large language models into production systems requires a more sophisticated approach. This often involves combining ChatGPT with your proprietary data, managing API interactions, and building robust, scalable applications.

Retrieval-Augmented Generation (RAG)

One of the most critical patterns for production LLM systems is Retrieval-Augmented Generation (RAG). RAG allows ChatGPT to answer questions based on your specific, up-to-date, or proprietary documents, going beyond its initial training data. Here’s how it works:

- Your documents (PDFs, internal wikis, databases, etc.) are processed and split into smaller “chunks.”

- These chunks are converted into numerical representations called “embeddings” using an embedding model (e.g., OpenAI’s

text-embedding-3-small). - The embeddings are stored in a specialized database called a vector database (such as Pinecone, Weaviate, Chroma, or Qdrant).

- When a user asks a question, the question itself is also converted into an embedding.

- The system searches the vector database for document chunks whose embeddings are most similar to the question’s embedding.

- These relevant document chunks are then retrieved and provided as context to ChatGPT, alongside the original user query.

- ChatGPT uses this augmented context to generate a precise and informed answer, grounded in your data.

For production RAG systems, chunking documents into 512-token overlapping segments and using OpenAI’s text-embedding-3-small model achieves a good balance of accuracy and cost.

Building with LangChain and LlamaIndex

Frameworks like LangChain and LlamaIndex have emerged to simplify the development of LLM-powered applications. LangChain provides a structured way to build complex workflows:

- Chains: Sequences of calls to LLMs or other utilities (e.g., a chain that summarizes text, then translates it).

- Agents: LLMs that can use “tools” to interact with the outside world (e.g., search engines, APIs, databases) to accomplish tasks.

- Tools: Functions an agent can call, like a calculator, a web search, or a database lookup.

- Memory: Enables agents and chains to remember past interactions, crucial for conversational applications.

Practical Example: Customer Support Bot with LangChain

Imagine a customer support bot. A LangChain agent could be configured with a “product knowledge base lookup” tool (which performs a RAG query on your product documentation) and a “create support ticket” tool (which calls an internal CRM API). When a customer asks about a product feature, the agent uses the RAG tool to find the answer. If the customer expresses frustration, the agent might use the CRM tool to create a ticket, remembering the conversation context via memory.

LlamaIndex, on the other hand, specializes in making it easy to build document Q&A systems using your private data. It offers robust data ingestion, indexing, and querying capabilities specifically tailored for RAG.

Managing Production OpenAI API Calls

When integrating ChatGPT via the OpenAI API into production, several considerations are vital for reliability and efficiency:

- Rate Limiting: OpenAI imposes limits on the number of requests you can make per minute. Implement robust rate limiting logic in your application to prevent exceeding these limits and causing errors.

- Caching: For frequently asked questions or common prompts, cache the LLM’s responses to reduce API calls, improve latency, and save costs.

- Retry Logic: Network issues or temporary API outages can occur. Implement exponential backoff and retry logic for API calls to gracefully handle transient failures.

A production RAG system serving 1,000 queries/day on GPT-4o typically costs $15-50/day depending on context length.

Comparing ChatGPT to Other AI Assistants

While ChatGPT is a dominant player, the AI landscape is rich with powerful alternatives, each with distinct strengths:

- ChatGPT (OpenAI):

- Strengths: Best-in-class ecosystem with extensive plugin support, widest integrations, strong general knowledge, and highly refined user experience. Excellent for a broad range of creative and analytical tasks.

- Models: GPT-4o, GPT-4, GPT-3.5.

- Claude 3.5 Haiku/Sonnet (Anthropic):

- Strengths: Known for longer context windows (up to 200K tokens), superior for complex reasoning, nuanced understanding, and advanced coding tasks. Often preferred for tasks requiring deep analysis of lengthy documents or multi-turn conversations.

- Models: Claude 3.5 Sonnet (general purpose), Claude 3.5 Haiku (fast, cost-effective).

- Gemini 1.5 Pro (Google):

- Strengths: Boasts an impressive 1 million token context window, making it unparalleled for processing and understanding very long documents, entire codebases, or extensive videos. Excellent multimodal capabilities.

- Models: Gemini 1.5 Pro (with 1M token context).

- Mistral Large (Mistral AI):

- Strengths: Offers strong performance with competitive context windows. Notably, Mistral provides open-weight models (e.g., Mixtral 8x7B) that can be self-hosted, offering significant advantages for private deployments, data residency, and fine-tuning for specific enterprise needs.

- Models: Mistral Large (proprietary), Mixtral 8x7B (open-weight).

Security and Compliance for Enterprise ChatGPT Use

For businesses integrating ChatGPT into their operations, security and compliance are paramount. OpenAI offers specific features and agreements tailored for enterprise needs:

- Prompt Injection Attacks: These occur when malicious inputs manipulate the LLM to ignore its original instructions, reveal sensitive information, or generate harmful content. Guard against them by carefully sanitizing user inputs, implementing robust system prompts that reinforce desired behavior, and using techniques like input validation and output filtering.

- Data Residency: For organizations with strict data sovereignty requirements, OpenAI Enterprise offers options for data residency, allowing you to specify whether your data is processed and stored in the US or EU regions. This is crucial for meeting regional regulatory demands.

- Zero Data Retention (ZDR): Available for API usage under specific agreements, ZDR ensures that your prompts and completions are not retained by OpenAI beyond the time necessary to process the request. This is a critical feature for privacy-sensitive applications and industries.

- Audit Logging: The Enterprise tier provides comprehensive activity logs, detailing API calls, user interactions, and model usage. These logs are essential for compliance reviews, security monitoring, and internal governance.

- GDPR Considerations: When using the ChatGPT API, you retain more control over data processing and can establish data processing agreements (DPAs) with OpenAI, which is vital for GDPR compliance. Using the consumer-facing ChatGPT product, however, generally involves less direct control and requires users to adhere to OpenAI’s standard terms, which may not always align with stringent corporate GDPR policies.

“`

This content will be inserted before the FAQ section.

“`

Frequently Asked Questions

What are 5 prompt templates to get better results from ChatGPT?

The most effective ChatGPT prompt templates include: (1) Role + task: ‘Act as a [expert role]. [Task] for [audience].’; (2) Few-shot examples: provide 1–2 input/output pairs before your actual request; (3) Chain-of-thought: ‘Think step by step before answering.’; (4) Format constraint: ‘Respond only in JSON with keys: title, summary, tags.’; (5) Iterative refinement: start with a draft, then follow up with ‘Make it 20% shorter and more direct.’

How do I prevent ChatGPT from hallucinating or making factual errors?

To minimize hallucinations: (1) Ask ChatGPT to say ‘I don’t know’ when uncertain. (2) Use retrieval-augmented generation (RAG) via LangChain or the OpenAI API with your own knowledge base. (3) Enable web browsing in ChatGPT Plus for real-time data. (4) Always fact-check statistics, dates, names, and citations against primary sources. (5) Chain-of-thought prompting improves factual accuracy by 20–30% on complex reasoning tasks.

When should I use ChatGPT vs the API or a fine-tuned model?

Use ChatGPT.com for ad-hoc tasks, brainstorming, and personal productivity. Use the OpenAI API when you need to integrate AI into applications, automate workflows, or process large volumes of text programmatically — tools like LangChain and LlamaIndex make this easy. Use fine-tuned models when you need highly consistent tone/format or domain-specific expertise (e.g., legal document drafting, medical coding) — fine-tuning on 50–500 examples can significantly reduce prompt length and improve accuracy for specialized tasks.

By mastering the techniques outlined in this guide, you can move beyond basic interactions and truly unlock the transformative power of ChatGPT, making it an indispensable asset in your personal and professional endeavors. The future of human-AI collaboration is here, and with these skills, you’re ready to shape it.