Updated April 2026. If you have spent any time managing the flow of global goods, you know that the margin for operational error shrinks with every passing year. AI in Supply Chain Management is the strategic application of machine learning, automated decision engines, and predictive analytics to optimize everything from raw material procurement to last-mile delivery. Modern networks can no longer rely on static spreadsheets or historical intuition to navigate sudden disruptions, fluctuating consumer demand, or geopolitical shifts that upend logistics. Instead, supply chain professionals use intelligent algorithms to gain real-time visibility and build resilience against unforeseen market shocks.

We are witnessing a paradigm shift in which cognitive computing moves beyond pilot projects and into the foundational architecture of global commerce. Algorithmic engines analyze petabytes of fragmented transit data to reroute shipments before delays occur. Natural language processing models scan thousands of supplier contracts and flag clauses that pose compliance risks. This comprehensive pillar guide explores the foundational mechanics, core applications, tangible benefits, and critical roadblocks associated with digitizing commercial networks. By understanding how these systems operate, procurement leaders and logistics coordinators can reduce carrying costs, mitigate supplier risks, and build distribution ecosystems that hold up under future disruptions.

The Foundational Mechanics of Intelligent Logistics

Understanding the intersection of cognitive computing and commercial distribution begins with identifying the core technologies driving the transformation. Machine learning algorithms are the bedrock of modern network optimization. These models process vast repositories of structured and unstructured information, ranging from weather satellite feeds to social media sentiment, to detect correlations that human analysts cannot find at that scale. According to a McKinsey 2026 report, predictive models improve demand forecasting precision by up to 45% because they update their own weights as new data arrives. When incoming data departs sharply from the expected pattern, the model re-estimates its forecast immediately instead of waiting for the next planning cycle.
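
To make "updating weights as new data arrives" concrete, here is a minimal sketch of online learning. It uses a linear demand model that takes one stochastic gradient descent step per new observation. The class name, the single feature, and the learning rate are hypothetical. Production forecasters typically use far richer models and often retrain in batches, so this only illustrates the incremental update idea.

```python
class OnlineDemandModel:
    """Hypothetical linear demand model that updates its weights after every new observation."""

    def __init__(self, n_features: int, learning_rate: float = 0.01) -> None:
        self.weights = [0.0] * n_features  # one weight per input signal
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, features: list[float]) -> float:
        return self.bias + sum(w * x for w, x in zip(self.weights, features))

    def update(self, features: list[float], actual: float) -> float:
        """Take one stochastic gradient step on squared error; return the error before the step."""
        error = self.predict(features) - actual
        # The gradient of 0.5 * error**2 with respect to each weight is error * x.
        self.weights = [
            w - self.learning_rate * error * x for w, x in zip(self.weights, features)
        ]
        self.bias -= self.learning_rate * error
        return error


# Usage: a hypothetical noiseless stream where daily demand = 2 * promo_intensity + 5.
model = OnlineDemandModel(n_features=1, learning_rate=0.05)
for i in range(3000):
    x = float(i % 3 + 1)
    model.update([x], 2 * x + 5)
print(round(model.predict([4.0]), 2))  # approaches 13.0 as the weights converge
```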

A critical component of this infrastructure is algorithmic demand sensing, a technique that captures near-real-time market signals to adjust procurement orders dynamically. Instead of relying on rigid quarterly reviews, systems using this technique analyze point-of-sale data, competitor pricing changes, and localized economic indicators daily. The approach works because it shortens the lag between a shift in consumer behavior and the corresponding adjustment on the manufacturing floor, preventing costly overproduction cycles.
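
One simple way to picture demand sensing is to blend a slow baseline forecast with an exponentially smoothed point-of-sale signal, then resize the next order from the blended figure. This sketch assumes the smoothing factor (`alpha`), the 50/50 `signal_weight`, and a days-of-cover ordering rule. All of these are illustrative choices, not a standard method.

```python
import math


def sense_demand(baseline: float, recent_pos: list[float],
                 alpha: float = 0.3, signal_weight: float = 0.5) -> float:
    """Blend a slow baseline forecast with an exponentially smoothed point-of-sale signal."""
    if not recent_pos:
        return baseline  # no fresh signal: fall back to the baseline
    smoothed = recent_pos[0]
    for units in recent_pos[1:]:
        # Exponential smoothing: recent days count more than older ones.
        smoothed = alpha * units + (1 - alpha) * smoothed
    return (1 - signal_weight) * baseline + signal_weight * smoothed


def adjust_order(daily_demand: float, days_of_cover: int, on_hand: int, on_order: int) -> int:
    """Order enough to cover the sensed demand horizon, net of stock already owned."""
    target = daily_demand * days_of_cover
    return max(0, math.ceil(target - on_hand - on_order))


# Usage: the quarterly plan says 100 units/day, but the last five days of POS data run hotter.
sensed = sense_demand(100.0, [104, 112, 118, 121, 125])
print(round(sensed, 1), adjust_order(sensed, days_of_cover=7, on_hand=500, on_order=100))
```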

Consider a multinational electronics manufacturer sourcing rare earth metals. A sudden geopolitical tariff adjustment triggers an automated alert within the procurement dashboard. Because the system uses forecasting models similar to those employed by financial institutions, it immediately recommends alternative suppliers from pre-vetted compliance profiles and calculates the resulting cost variance. This speed of response can prevent a factory shutdown. For a deeper dive into the technical architecture underpinning these systems, explore our dedicated article: [CLUSTER LINK: Understanding Intelligent Logistics].
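
The recommendation step in this scenario can be sketched as follows. It filters candidates to pre-vetted suppliers, ranks them by landed cost, and reports each one's total cost variance against the incumbent. Supplier names, prices, and the simple landed-cost formula (price plus ad valorem tariff plus freight) are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Supplier:
    name: str
    unit_price: float
    tariff_rate: float          # ad valorem tariff as a fraction of unit_price
    freight_per_unit: float
    compliance_approved: bool   # True if the supplier passed pre-vetting


def landed_cost(s: Supplier) -> float:
    return s.unit_price * (1 + s.tariff_rate) + s.freight_per_unit


def recommend_alternatives(current: Supplier, candidates: list[Supplier],
                           quantity: int) -> list[tuple[str, float]]:
    """Rank pre-vetted candidates by landed cost; report total cost variance vs. the current supplier."""
    baseline = landed_cost(current) * quantity
    approved = [s for s in candidates if s.compliance_approved]
    return [(s.name, landed_cost(s) * quantity - baseline)
            for s in sorted(approved, key=landed_cost)]


# Usage: a new 25% tariff lands on the incumbent (all names and prices are hypothetical).
incumbent = replace(Supplier("Incumbent", 100.0, 0.0, 2.0, True), tariff_rate=0.25)
candidates = [
    Supplier("Supplier A", 110.0, 0.0, 3.0, True),
    Supplier("Supplier B", 105.0, 0.05, 2.0, True),
    Supplier("Supplier C", 90.0, 0.0, 1.0, False),  # cheapest, but not pre-vetted
]
for name, variance in recommend_alternatives(incumbent, candidates, quantity=1_000):
    print(f"{name}: {variance:+,.2f}")
```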

Core Applications Across Procurement, Forecasting, and Distribution

The practical integration of intelligent software manifests across multiple crucial nodes of global trade networks. By replacing manual heuristics with dynamic computational models, organizations fundamentally reshape how they anticipate market needs and move physical inventory. One of the most impactful deployments is advanced inventory optimization using reinforcement learning. Unlike standard min-max algorithms, these learning agents treat warehouse stocking as an ongoing game, earning ‘rewards’ for minimizing carrying costs while maintaining high service levels.
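
The "game with rewards" framing corresponds to reinforcement learning. Below is a toy tabular Q-learning agent for a single-SKU shelf. Its reward penalizes both units held overnight (carrying cost) and unmet demand (a service-level proxy). The capacity, costs, order options, and uniform demand distribution are invented for illustration. Real deployments model far larger state spaces.

```python
import random

MAX_INVENTORY = 10          # shelf capacity in units (hypothetical)
ORDER_OPTIONS = range(6)    # the agent may order 0..5 units per day
HOLDING_COST = 1.0          # cost per unit left on the shelf overnight
STOCKOUT_PENALTY = 10.0     # cost per unit of unmet demand (service-level proxy)


def step(inventory: int, order: int, demand: int) -> tuple[int, float]:
    """Advance one day; return (next inventory, reward). Stock above capacity is not received."""
    stock = min(inventory + order, MAX_INVENTORY)
    sold = min(stock, demand)
    next_inventory = stock - sold
    reward = -HOLDING_COST * next_inventory - STOCKOUT_PENALTY * (demand - sold)
    return next_inventory, reward


def greedy(q: dict, inventory: int) -> int:
    return max(ORDER_OPTIONS, key=lambda a: q[(inventory, a)])


def train_q_table(episodes=2000, days=30, alpha=0.1, gamma=0.95, epsilon=0.1, seed=0) -> dict:
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(MAX_INVENTORY + 1) for a in ORDER_OPTIONS}
    for _ in range(episodes):
        inventory = rng.randint(0, MAX_INVENTORY)
        for _ in range(days):
            # Epsilon-greedy: mostly exploit the best known order, sometimes explore.
            order = rng.choice(ORDER_OPTIONS) if rng.random() < epsilon else greedy(q, inventory)
            demand = rng.randint(0, 4)  # hypothetical uniform daily demand
            next_inv, reward = step(inventory, order, demand)
            # Q-learning update toward reward plus discounted best future value.
            target = reward + gamma * max(q[(next_inv, a)] for a in ORDER_OPTIONS)
            q[(inventory, order)] += alpha * (target - q[(inventory, order)])
            inventory = next_inv
    return q


q_table = train_q_table()
print({level: greedy(q_table, level) for level in range(MAX_INVENTORY + 1)})
```

Unlike a fixed min-max rule, the learned policy is whatever ordering behavior scored best under these costs. Changing `STOCKOUT_PENALTY` shifts the balance between service level and carrying cost.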

Transportation and route planning represent another high-density application area. Computer vision systems integrated into fleet dashcams analyze traffic patterns and road conditions in real time, feeding this visual data back into central dispatch algorithms. When a global parcel carrier reroutes trucks away from sudden urban traffic incidents across its entire fleet, the cumulative savings can reach thousands of gallons of fuel annually. The system optimizes each route by continuously weighing delivery windows, fuel consumption rates, and real-time transit velocities to find the lowest-cost feasible sequence of stops.
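
For a handful of stops, the best feasible sequence can be found by brute force. The sketch below tries every stop order, discards orders that miss a delivery window, and keeps the one with the fewest driving minutes (a fuel proxy). It then re-solves after a live feed reports an incident. Travel times, windows, and the 5-minute service time are hypothetical. Real dispatch systems rely on heuristics or dedicated vehicle-routing solvers, because exhaustive search grows factorially with the number of stops.

```python
from itertools import permutations

Matrix = dict[str, dict[str, float]]


def symmetric(legs: dict[tuple[str, str], float]) -> Matrix:
    """Build a two-way travel-time matrix (minutes) from one-way leg estimates."""
    matrix: Matrix = {}
    for (a, b), minutes in legs.items():
        matrix.setdefault(a, {})[b] = minutes
        matrix.setdefault(b, {})[a] = minutes
    return matrix


def route_minutes(sequence, travel: Matrix, windows, depot="DEPOT", service_min=5.0):
    """Total driving minutes for a stop sequence, or None if a delivery window is missed."""
    clock = driving = 0.0
    here = depot
    for stop in sequence:
        leg = travel[here][stop]
        driving += leg
        clock += leg
        earliest, latest = windows[stop]
        if clock > latest:
            return None                                # arrived after the window closed
        clock = max(clock, earliest) + service_min     # wait if early, then unload
        here = stop
    return driving + travel[here][depot]               # include the return leg


def best_route(stops, travel: Matrix, windows, depot="DEPOT"):
    """Exhaustively search every order of stops; only practical for a handful of stops."""
    best_seq, best_cost = None, float("inf")
    for seq in permutations(stops):
        cost = route_minutes(seq, travel, windows, depot)
        if cost is not None and cost < best_cost:
            best_seq, best_cost = seq, cost
    return best_seq, best_cost


legs = {("DEPOT", "A"): 10, ("DEPOT", "B"): 15, ("DEPOT", "C"): 20,
        ("A", "B"): 5, ("A", "C"): 12, ("B", "C"): 6}
windows = {"A": (0, 120), "B": (0, 30), "C": (0, 120)}  # minutes after leaving the depot
print(best_route(["A", "B", "C"], symmetric(legs), windows))

legs[("A", "B")] = 30  # a live feed reports an incident on the A-B road
print(best_route(["A", "B", "C"], symmetric(legs), windows))
```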

High-Impact Integration Zones

To visualize the breadth of these deployments, consider the following breakdown of technological interventions:

| Application Area | Primary Technology Employed | Core Operational Benefit |
|---|---|---|
| Predictive Demand Forecasting | Deep Neural Networks | Reduced waste and precision stock alignment |
| Inventory Optimization | Reinforcement Learning | Minimized stockouts and lower warehousing costs |
| Logistics Tracking | Dynamic Route Algorithms | Faster final-mile delivery and fuel efficiency |
| Supplier Risk Management | Natural Language Processing | Proactive contract monitoring and compliance tracking |

Furthermore, businesses are deploying intelligent workflow automation platforms to handle routine administrative tasks, such as invoice reconciliation and customs documentation generation. This shift allows human operators to focus entirely on strategic relationship management and complex exception handling. For a deeper dive into specific use cases, explore our dedicated article: [CLUSTER LINK: Key Applications of AI Across the Supply Chain].
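
Invoice reconciliation is commonly automated as a "three-way match." Each invoice line is compared with the purchase order and the goods receipt, and only mismatches are routed to a person. This is a minimal sketch. The record layout and the 2% price tolerance are assumptions, not any specific platform's rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderLine:
    sku: str
    quantity: int
    unit_price: float


def three_way_match(po: list[OrderLine], received: dict[str, int],
                    invoice: list[OrderLine], price_tolerance: float = 0.02) -> list[str]:
    """Compare invoice lines against the purchase order and goods receipt; return exceptions."""
    ordered = {line.sku: line for line in po}
    exceptions = []
    for line in invoice:
        po_line = ordered.get(line.sku)
        if po_line is None:
            exceptions.append(f"{line.sku}: invoiced but not on the purchase order")
            continue
        got = received.get(line.sku, 0)
        if line.quantity > got:
            exceptions.append(f"{line.sku}: invoiced {line.quantity}, received {got}")
        if abs(line.unit_price - po_line.unit_price) > price_tolerance * po_line.unit_price:
            exceptions.append(f"{line.sku}: price {line.unit_price} vs PO {po_line.unit_price}")
    return exceptions


po = [OrderLine("SKU-1", 100, 5.00), OrderLine("SKU-2", 50, 10.00)]
received = {"SKU-1": 100, "SKU-2": 40}
invoice = [OrderLine("SKU-1", 100, 5.05), OrderLine("SKU-2", 50, 10.00), OrderLine("SKU-9", 1, 1.00)]
for issue in three_way_match(po, received, invoice):
    print(issue)  # only these lines need a human; SKU-1 passes within tolerance
```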

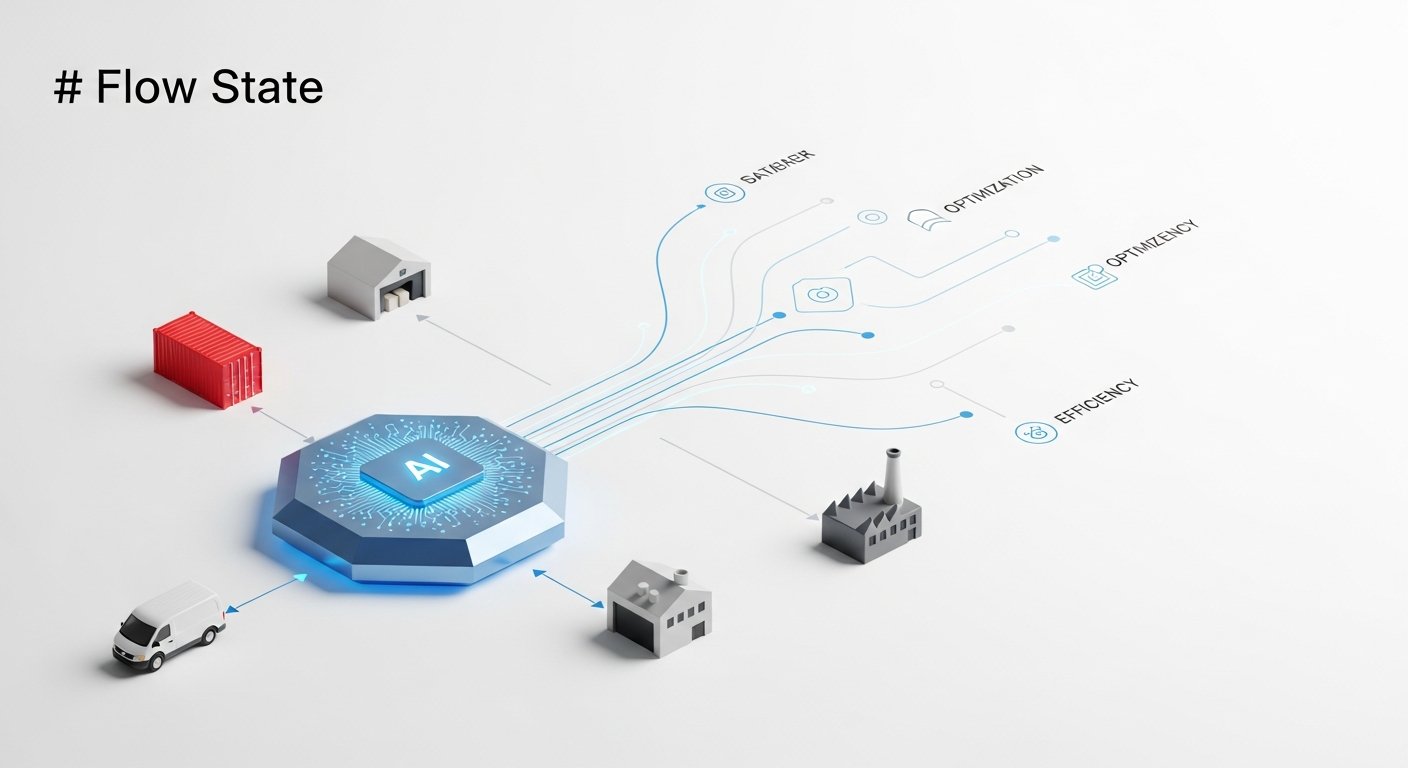

[INLINE IMAGE 2: A diagram illustrating the flow of data from point-of-sale systems through machine learning models to automated warehouse fulfillment systems.]

How Do Automated Workflows Drive Operational Resilience?

Resilience in modern commerce is no longer about maintaining massive stockpiles of buffer inventory; it is defined by the speed at which an organization can identify a disruption and execute a corrective pivot. Automated workflows provide this agility by dismantling traditional informational silos. When machine learning models ingest data across the entire value chain—from Tier 3 raw material suppliers to post-purchase customer feedback—they create an interconnected web of visibility.

A Supply Chain Management Review (2023) [VERIFY DATE] analysis found that companies integrating advanced predictive analytics experienced a 15% reduction in overall carrying costs while simultaneously improving on-time delivery metrics. This dual benefit occurs because optimization models reduce the need for bloated safety stock. Instead of holding excess inventory “just in case,” companies position inventory “just in time,” sized by statistically derived confidence intervals around their demand forecasts.
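
The link between forecast accuracy and safety stock can be seen in the textbook formula SS = z · σ · √L. Here z is the normal quantile for the target service level, σ is the standard deviation of daily forecast error, and L is the lead time in days. The formula assumes independent, normally distributed daily errors and a fixed lead time. The figures below are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def safety_stock(service_level: float, forecast_error_sd: float, lead_time_days: float) -> float:
    """Textbook SS = z * sigma * sqrt(L), assuming independent, normally distributed daily errors."""
    z = NormalDist().inv_cdf(service_level)  # about 1.645 for a 95% service level
    return z * forecast_error_sd * sqrt(lead_time_days)


# Same 95% target and 9-day lead time; only forecast accuracy changes (hypothetical figures).
legacy = safety_stock(0.95, forecast_error_sd=40, lead_time_days=9)
improved = safety_stock(0.95, forecast_error_sd=25, lead_time_days=9)
print(f"{legacy:.0f} -> {improved:.0f} units ({1 - improved / legacy:.1%} less buffer)")
```

Because safety stock scales linearly with σ, cutting the forecast-error standard deviation from 40 to 25 units per day shrinks the buffer by 37.5% at the same service level.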

To understand the practical implications, it helps to examine specific outcomes:

- What success looks like: A localized weather event shuts down a major port. The algorithmic engine detects the disruption via API feeds, immediately identifies all affected inbound shipping containers, calculates alternative port availability, and automatically issues rerouting instructions to maritime carriers before human dispatchers even log in for their morning shift.

- What failure looks like: The same weather event occurs, but the organization relies on legacy ERP systems. Procurement teams spend 48 hours manually cross-referencing spreadsheets to locate delayed shipments, by which time alternative freight options have been fully booked by competitors, resulting in a two-week manufacturing line stoppage.
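
The "success" path above reduces to a matching step once the closure is known: find every container bound for the closed port and assign it to an alternative port with spare capacity. Anything that cannot be placed is escalated. In this sketch, the disruption is assumed to have already been detected upstream, and the port names, carriers, and capacity figures are hypothetical. Transmitting instructions to carriers is out of scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Container:
    container_id: str
    destination_port: str
    carrier: str


def plan_reroutes(closed_port: str, containers: list[Container],
                  alternatives: list[str], capacity: dict[str, int]):
    """Assign each container bound for a closed port to the first alternative with free capacity.

    Returns (instructions, unplaced): instructions are (carrier, container_id, new_port) tuples
    for the carriers; unplaced container IDs need a human dispatcher.
    """
    remaining = dict(capacity)  # copy so the caller's capacity snapshot is not mutated
    instructions, unplaced = [], []
    for c in containers:
        if c.destination_port != closed_port:
            continue  # not affected by this event
        for port in alternatives:  # alternatives are listed in order of preference
            if remaining.get(port, 0) > 0:
                remaining[port] -= 1
                instructions.append((c.carrier, c.container_id, port))
                break
        else:
            unplaced.append(c.container_id)  # no alternative had room
    return instructions, unplaced


fleet = [Container(f"C{i}", "PORT_X", "Carrier 1") for i in range(1, 5)]
fleet.append(Container("C5", "PORT_Y", "Carrier 2"))
moves, escalate = plan_reroutes("PORT_X", fleet, ["PORT_A", "PORT_B"], {"PORT_A": 2, "PORT_B": 1})
print(moves, escalate)
```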

These divergent outcomes highlight the necessity of transitioning from reactive management to proactive algorithmic execution. For a deeper dive into maximizing these benefits, explore our dedicated article: [CLUSTER LINK: Enhancing Operational Resilience through Automation].

Critical Roadblocks in Algorithmic Adoption

Despite the overwhelming operational advantages, migrating from legacy architecture to a fully intelligent network presents significant structural challenges. The most pervasive obstacle is fragmented, low-fidelity historical data. Machine learning models require massive volumes of sanitized, standardized inputs to generate accurate predictions. If an organization’s procurement data resides in a proprietary on-premise server while its logistics data lives in disparate cloud applications, the resulting analytical output will be deeply flawed. The algorithm weights inaccurate or outdated signals too heavily because it lacks the holistic context required to discern noise from actionable intelligence.

Another formidable barrier is the persistent capability gap within logistics teams. Deploying cognitive software requires a fundamental shift in workforce dynamics. Companies must invest heavily in upskilling teams to interpret complex algorithmic outputs rather than simply executing manual tasks. Operators need the analytical fluency to know when to trust an automated recommendation and when human intuition should override the system due to unquantifiable external factors.

Common Mistakes in Digitization

- Deploying without data governance: Feeding raw, uncleaned ERP data directly into a machine learning model, resulting in “garbage in, garbage out” forecasting errors.

- Ignoring change management: Failing to secure buy-in from warehouse floor managers, leading to a situation where staff manually bypass algorithmic routing suggestions out of mistrust.

- Aiming for massive monolithic rollouts: Attempting to overhaul the entire global network simultaneously instead of scaling successful localized pilot programs.

- Overlooking ethical supplier parameters: Neglecting to program sustainability and human rights compliance constraints into the procurement automation logic.

Overcoming these friction points requires deliberate, phased methodologies that prioritize data hygiene before mathematical complexity. For a deeper dive into navigating these implementation hurdles, explore our dedicated article: [CLUSTER LINK: Overcoming Algorithmic Adoption Roadblocks].
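
Data hygiene often starts with an explicit validation gate that runs before any record reaches a model. Failing records are quarantined, and the run is blocked if too many fail. The required fields, unit codes, and 5% rejection threshold below are hypothetical governance rules.

```python
from datetime import date

REQUIRED_FIELDS = ("sku", "order_date", "quantity", "unit")
ALLOWED_UNITS = {"EA", "CS", "PLT"}  # hypothetical unit-of-measure codes


def validate(record: dict, today: date) -> list[str]:
    """Return the rule violations for one ERP record (an empty list means clean)."""
    problems = [f"missing {name}" for name in REQUIRED_FIELDS if record.get(name) in (None, "")]
    qty = record.get("quantity")
    if isinstance(qty, (int, float)) and qty < 0:
        problems.append("negative quantity")
    unit = record.get("unit")
    if unit and unit not in ALLOWED_UNITS:
        problems.append(f"unknown unit {unit!r}")
    order_date = record.get("order_date")
    if isinstance(order_date, date) and order_date > today:
        problems.append("order date in the future")
    return problems


def quality_gate(records: list[dict], today: date, max_reject_rate: float = 0.05):
    """Split records into clean and rejected; block the model run if too many fail."""
    clean, rejected = [], []
    for record in records:
        if validate(record, today):
            rejected.append(record)
        else:
            clean.append(record)
    reject_rate = len(rejected) / len(records) if records else 0.0
    return clean, rejected, reject_rate <= max_reject_rate


today = date(2026, 4, 1)
batch = [
    {"sku": "A-1", "order_date": date(2026, 3, 30), "quantity": 12, "unit": "EA"},
    {"sku": "", "order_date": date(2026, 3, 30), "quantity": 5, "unit": "EA"},
    {"sku": "B-2", "order_date": date(2026, 3, 31), "quantity": -4, "unit": "EA"},
    {"sku": "C-3", "order_date": date(2026, 5, 2), "quantity": 7, "unit": "box"},
]
clean, rejected, ok_to_train = quality_gate(batch, today)
print(len(clean), len(rejected), ok_to_train)
```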

[INLINE IMAGE 4: A side-by-side comparison illustrating a fragmented traditional data silo architecture versus a unified centralized algorithmic data lake.]

Strategic Blueprint for Deployment

Executing a successful digital transformation across a global fulfillment network demands rigorous architectural planning. Organizations that attempt to purchase off-the-shelf algorithmic solutions without aligning them to specific operational constraints frequently encounter negative ROI. The deployment blueprint must begin with comprehensive data standardization. Before a single line of predictive code is written, data engineering teams must unify disparate inputs—from legacy warehouse management systems and supplier portals—into a single source of truth.
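
At its simplest, unifying disparate inputs means mapping each source's field names onto one canonical schema and normalizing formats, so that the same SKU at the same site matches across systems. The two source systems and their column names below are hypothetical. In practice, these mappings usually live in a data catalog rather than in code.

```python
# Hypothetical column names for two source systems.
FIELD_MAPS = {
    "wms": {"ItemNo": "sku", "Site": "location", "QtyOnHand": "quantity"},
    "supplier_portal": {"sku": "sku", "location_code": "location", "available_units": "quantity"},
}


def to_canonical(source: str, row: dict) -> dict:
    """Rename source-specific fields and normalize formats into one shared schema."""
    renamed = {canonical: row[raw] for raw, canonical in FIELD_MAPS[source].items()}
    return {
        "sku": str(renamed["sku"]).strip().upper(),        # "a-1 " and "A-1" become one key
        "location": str(renamed["location"]).strip().upper(),
        "quantity": int(renamed["quantity"]),               # text exports often store numbers as strings
        "source": source,                                   # keep lineage for later audits
    }


def unify(feeds: dict[str, list[dict]]) -> list[dict]:
    records = [to_canonical(src, row) for src, rows in feeds.items() for row in rows]
    return sorted(records, key=lambda r: (r["sku"], r["location"], r["source"]))


unified = unify({
    "wms": [{"ItemNo": "A-1", "Site": "DC01", "QtyOnHand": "12"}],
    "supplier_portal": [{"sku": "a-1 ", "location_code": "dc01", "available_units": 30}],
})
print(unified)
```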

Gartner’s 2026 enterprise software analysis indicates that 60% of successful cognitive rollouts begin with constraint-focused piloting. This methodology involves identifying a single, high-friction operational node, such as excessive scrap rates on a specific manufacturing line or persistent delays at a single regional distribution center, and deploying targeted analytics solely to solve that isolated issue. By restricting the initial scope, leadership teams can prove financial viability, calibrate the mathematical models, and build crucial workforce trust without risking broader network stability.
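
Proving financial viability for a scrap-rate pilot can be as simple as comparing the pilot line with its own baseline. The check asks two questions: did the relative reduction meet the agreed KPI, and did avoided scrap pay back the pilot's cost? All figures and the 20% target are hypothetical, and the ROI here is undiscounted.

```python
def evaluate_pilot(baseline_scrap_rate: float, pilot_scrap_rate: float, monthly_units: int,
                   cost_per_scrapped_unit: float, pilot_cost: float, months: int,
                   target_reduction: float = 0.20) -> dict:
    """Compare a pilot line against its own baseline; return KPI status and simple ROI."""
    reduction = (baseline_scrap_rate - pilot_scrap_rate) / baseline_scrap_rate
    avoided_units = (baseline_scrap_rate - pilot_scrap_rate) * monthly_units * months
    savings = avoided_units * cost_per_scrapped_unit
    return {
        "relative_reduction": reduction,
        "kpi_met": reduction >= target_reduction,
        "savings": savings,
        "roi": (savings - pilot_cost) / pilot_cost,  # undiscounted, pilot period only
    }


result = evaluate_pilot(0.06, 0.045, monthly_units=20_000, cost_per_scrapped_unit=30.0,
                        pilot_cost=40_000.0, months=6)
print(result)
```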

Once the pilot achieves its defined key performance indicators, the organization can focus on scaling. This phase requires a technical architecture that supports secure API integrations with third-party vendors and carriers. A robust deployment blueprint also incorporates continuous feedback loops: human experts must regularly audit the system’s output to ensure the algorithms have not developed unintended biases, such as consistently favoring a high-speed supplier that repeatedly violates internal sustainability mandates. For a deeper dive into structuring your organizational rollout, explore our dedicated article: [CLUSTER LINK: Best Practices for Deployment Strategy].
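
One audit that catches the bias described above flags any supplier that wins an outsized share of automated awards despite recorded violations. This sketch assumes two inputs: a list of award decisions and a count of violations per supplier. The 30% share threshold and supplier names are hypothetical.

```python
from collections import Counter


def audit_awards(awards: list[str], violations: dict[str, int], share_threshold: float = 0.30):
    """Flag suppliers that win an outsized share of automated awards despite recorded violations.

    awards: one supplier name per purchase order the engine awarded in the audit window.
    violations: supplier -> count of sustainability-mandate violations in the same window.
    """
    if not awards:
        return []
    total = len(awards)
    flagged = []
    for supplier, wins in Counter(awards).most_common():
        share = wins / total
        if share > share_threshold and violations.get(supplier, 0) > 0:
            flagged.append((supplier, share, violations[supplier]))
    return flagged


window = ["FastFreight"] * 6 + ["GreenLine"] * 3 + ["MidCo"]
print(audit_awards(window, {"FastFreight": 4}))
```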

What Are the Emerging Technologies Reshaping Global Trade?

The horizon of digital logistics extends far beyond basic predictive dashboards. The convergence of cognitive processing with spatial computing and hyper-connectivity is creating entirely new operational paradigms. One of the most critical developments is the maturation of the digital twin: a data-driven virtual replica of an entire physical supply network that mirrors its nodes, flows, and constraints. By simulating years of supply chain activity in minutes, executives can stress-test their infrastructure against hypothetical trade wars, natural disasters, or sudden raw material shortages without risking real capital.
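
A real digital twin models many nodes and flows. Still, the stress-testing idea can be shown with a deliberately tiny Monte Carlo simulation of one SKU at one distribution center, comparing fill rate with and without random inbound port closures. Every parameter here is hypothetical: the reorder policy, lead time, demand range, closure probability, and closure length.

```python
import random
from statistics import mean


def simulate_year(rng: random.Random, reorder_point: int, order_qty: int, lead_time: int,
                  disruption_prob: float, disruption_days: int, days: int = 365) -> float:
    """Simulate one SKU at one distribution center for a year; return the fill rate."""
    on_hand = reorder_point + order_qty
    in_transit: list[tuple[int, int]] = []  # (days until arrival, quantity) per open order
    blocked_for = 0                          # days left in the current inbound port closure
    demanded = served = 0
    for _ in range(days):
        if blocked_for == 0 and rng.random() < disruption_prob:
            blocked_for = disruption_days
        if blocked_for > 0:
            blocked_for -= 1                 # inbound freight is stuck; arrival clocks pause
        else:
            in_transit = [(d - 1, q) for d, q in in_transit]
            on_hand += sum(q for d, q in in_transit if d <= 0)
            in_transit = [(d, q) for d, q in in_transit if d > 0]
        demand = rng.randint(20, 40)         # hypothetical daily demand range
        sold = min(on_hand, demand)
        on_hand -= sold
        demanded += demand
        served += sold
        # Reorder when inventory position (on hand + in transit) falls to the reorder point.
        if on_hand + sum(q for _, q in in_transit) <= reorder_point:
            in_transit.append((lead_time, order_qty))
    return served / demanded


def stress_test(years: int = 100, seed: int = 7, **scenario) -> float:
    """Average fill rate over many simulated years of one scenario."""
    rng = random.Random(seed)
    return mean(simulate_year(rng, **scenario) for _ in range(years))


policy = dict(reorder_point=300, order_qty=600, lead_time=7)
calm = stress_test(disruption_prob=0.0, disruption_days=0, **policy)
port_shock = stress_test(disruption_prob=0.01, disruption_days=14, **policy)
print(f"fill rate: calm {calm:.1%}, with 14-day port closures {port_shock:.1%}")
```

A hundred simulated years run in well under a second here. Rerunning with a higher `reorder_point` shows how much extra buffer would be needed to absorb the same closures.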

Simultaneously, we are witnessing the rise of autonomous execution networks. While early algorithms focused purely on providing recommendations to human planners, next-generation systems possess the authorization to independently negotiate freight rates, execute purchase orders, and dynamically reroute physical assets based on predefined profitability parameters.
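
"Predefined profitability parameters" can be pictured as hard guardrails around an autonomous decision. In this sketch, the agent books the cheapest spot-market quote that meets the deadline, but only while a spend limit and a minimum margin hold. Otherwise it escalates to a human planner. The quote structure, 10% margin floor, and spend limit are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FreightQuote:
    carrier: str
    rate: float
    transit_days: int


def decide_booking(quotes: list[FreightQuote], revenue: float, cost_before_freight: float,
                   deadline_days: int, min_margin: float = 0.10, spend_limit: float = 5_000.0):
    """Book the cheapest on-time quote only if it stays inside predefined guardrails.

    Returns ("book", quote) or ("escalate", reason) for a human planner.
    """
    on_time = [q for q in quotes if q.transit_days <= deadline_days]
    if not on_time:
        return "escalate", "no quote meets the delivery deadline"
    best = min(on_time, key=lambda q: q.rate)
    if best.rate > spend_limit:
        return "escalate", f"{best.carrier} rate exceeds the autonomous spend limit"
    margin = (revenue - cost_before_freight - best.rate) / revenue
    if margin < min_margin:
        return "escalate", f"margin {margin:.1%} is below the {min_margin:.0%} floor"
    return "book", best


spot_quotes = [FreightQuote("Carrier A", 1_200.0, 5), FreightQuote("Carrier B", 900.0, 9),
               FreightQuote("Carrier C", 1_000.0, 6)]
print(decide_booking(spot_quotes, revenue=10_000.0, cost_before_freight=7_500.0, deadline_days=7))
```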

The Generational Shift in Operations

| Operational Aspect | Traditional Heuristic Approach | Next-Gen Cognitive Approach |
|---|---|---|
| Disruption Management | Reactive troubleshooting after failures occur | Proactive mitigation via predictive anomaly detection |
| Inventory Strategy | Static safety stock based on annual averages | Dynamic optimization reacting to real-time market signals |
| Carrier Selection | Fixed annual contracts with limited flexibility | Algorithmic spot-market purchasing based on immediate need |
| Network Visibility | Fragmented milestones updated in daily batches | Continuous, unified telemetry from raw material to end-user |

As these technological capabilities compound, the competitive moat for global enterprises will no longer be built solely on physical scale or localized labor arbitrage. It will be determined by the speed and accuracy of their underlying cognitive infrastructure. The integration of AI in Supply Chain Management is definitively transitioning from a speculative competitive advantage into a baseline requirement for commercial survival. For a deeper dive into the specific tools driving tomorrow’s innovations, explore our dedicated article: [CLUSTER LINK: The Future of Global Trade Technology].

Sources & References

- McKinsey & Company. (2026). The State of Intelligent Logistics and Procurement Analytics. Global Operations Practice Report.

- Supply Chain Management Review. (2023). Evaluating the Financial Impact of Predictive Inventory Models. [VERIFY DATE]

- Gartner, Inc. (2026). Magic Quadrant for Supply Chain Planning Solutions: Autonomous Capabilities. Enterprise Logistics Research.

- HubSpot. (2026). Data-Driven Workflows: Automation Trends in B2B Operations. Annual State of Automation Report.

About the Author

Lena Petrova, Principal AI Ethicist & Futures Strategist (Certified AI Ethics Practitioner, Former Lead, UNESCO Global AI Policy Forum) — I am a passionate advocate for responsible innovation, guiding organizations to leverage cognitive technologies ethically for sustainable growth and a human-centric future of work.

Reviewed by Kai Miller, Lead Content Strategist, AI & Innovation — Last reviewed: April 10, 2026