What Are AI Development Frameworks?

AI development frameworks are structured platforms and toolsets that streamline the creation, training, and deployment of artificial intelligence and machine learning models. They abstract away complex mathematical operations and low-level programming, so developers and data scientists can focus on model design and problem-solving. As the infrastructure on which businesses innovate, automate, and adapt, they are central to how future technology is reshaping industries, job functions, and the evolving workplace.

At their core, these robust software architectures provide pre-built components, libraries, and APIs that facilitate every stage of the AI lifecycle. From data ingestion and preprocessing to neural network architecture design, model training, evaluation, and eventual deployment into production environments, they offer a coherent and efficient pathway. Their rise has democratized AI, making advanced machine learning and deep learning techniques accessible to a broader audience, thereby accelerating the pace of digital transformation and fostering novel applications in every sector.

The Essential Components of Modern AI Frameworks

Understanding the internal architecture of leading artificial intelligence SDKs is crucial for appreciating their power and utility. These platforms are far more than simple code libraries; they are integrated ecosystems designed to support the entire machine learning workflow. Consequently, a number of essential components consistently appear across different ML frameworks, each playing a vital role in enabling efficient AI development.

- Data Handling and Preprocessing Tools: Before any model can learn, data must be collected, cleaned, and transformed into a usable format. Frameworks offer extensive utilities for data loading, augmentation (e.g., rotating images, adding noise), scaling, and splitting into training, validation, and test sets. This often includes support for various data formats, from tabular data (CSVs) to images, audio, and text.

- Model Building and Architecture Design: This is arguably the most recognizable component. Deep learning libraries, in particular, provide flexible APIs for defining neural network architectures such as convolutional neural networks (CNNs), recurrent neural networks (RNNs), and transformers, while general-purpose toolkits cover more traditional machine learning models. They let developers stack different types of layers, specify activation functions, and configure how the layers connect.

- Training and Optimization Engines: Once a model is defined, it needs to be trained on data. Frameworks incorporate highly optimized algorithms for this purpose, including various optimizers (e.g., Adam, SGD), loss functions (e.g., cross-entropy, mean squared error), and gradient computation mechanisms. They often leverage hardware accelerators like GPUs and TPUs for parallel processing, significantly speeding up the iterative training process.

- Evaluation and Monitoring Tools: After training, models must be rigorously evaluated to verify performance and detect overfitting. Frameworks provide metrics (e.g., accuracy, precision, recall, F1-score) and visualization tools (e.g., TensorBoard for TensorFlow) to monitor training progress, debug models, and assess their effectiveness against unseen data.

- Deployment and Production Capabilities: A model’s value is realized when it can be integrated into real-world applications. Leading machine learning toolkits offer features for saving and loading trained models, converting them to efficient formats for deployment on various devices (edge devices, mobile, web), and serving them via APIs. This ensures that the intelligence developed can be leveraged across enterprise systems.

- Community and Ecosystem Support: While not a direct component of the code, a robust community, extensive documentation, and a thriving ecosystem of third-party libraries and pre-trained models are critical for the long-term viability and practical utility of any AI programming environment. This collective intelligence accelerates innovation and problem-solving.
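To make the data handling, training, and evaluation components above more concrete, here is a minimal sketch in plain Python of the work a framework's training engine automates. Everything in it is an illustrative assumption: the toy data (`y = 2x + 1`), the helper names `predict`, `mse`, and `train_linear`, and the learning rate are invented for this example and do not come from any particular framework.

```python
# Hand-written gradient descent on a toy regression task (illustrative only).
# Hypothetical, noise-free data following y = 2x + 1.
xs = [i / 49 for i in range(50)]
ys = [2.0 * x + 1.0 for x in xs]

# Data handling: hold out every fifth sample as a test set.
pairs = list(zip(xs, ys))
train = [p for i, p in enumerate(pairs) if i % 5 != 0]
test = [p for i, p in enumerate(pairs) if i % 5 == 0]


def predict(w, b, x):
    """Model definition: a single linear unit."""
    return w * x + b


def mse(w, b, data):
    """Loss function: mean squared error over a dataset."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in data) / len(data)


def train_linear(data, lr=0.1, epochs=2000):
    """Training engine: full-batch gradient descent on the MSE loss."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        # Gradients of the MSE with respect to w and b, derived by hand.
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in data) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Evaluation: measure the loss on data the model never saw during training.
w, b = train_linear(train)
test_mse = mse(w, b, test)
print(f"w={w:.3f}, b={b:.3f}, test MSE={test_mse:.2e}")
```

A framework replaces the hand-derived gradients with automatic differentiation, the loop with a tuned optimizer such as Adam or SGD, and the Python lists with tensors that can run on GPUs or TPUs.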

[INLINE IMAGE 1: diagram illustrating the typical lifecycle of an AI model, showing data preprocessing, model building, training, evaluation, and deployment stages, with framework components overlaid on each stage]

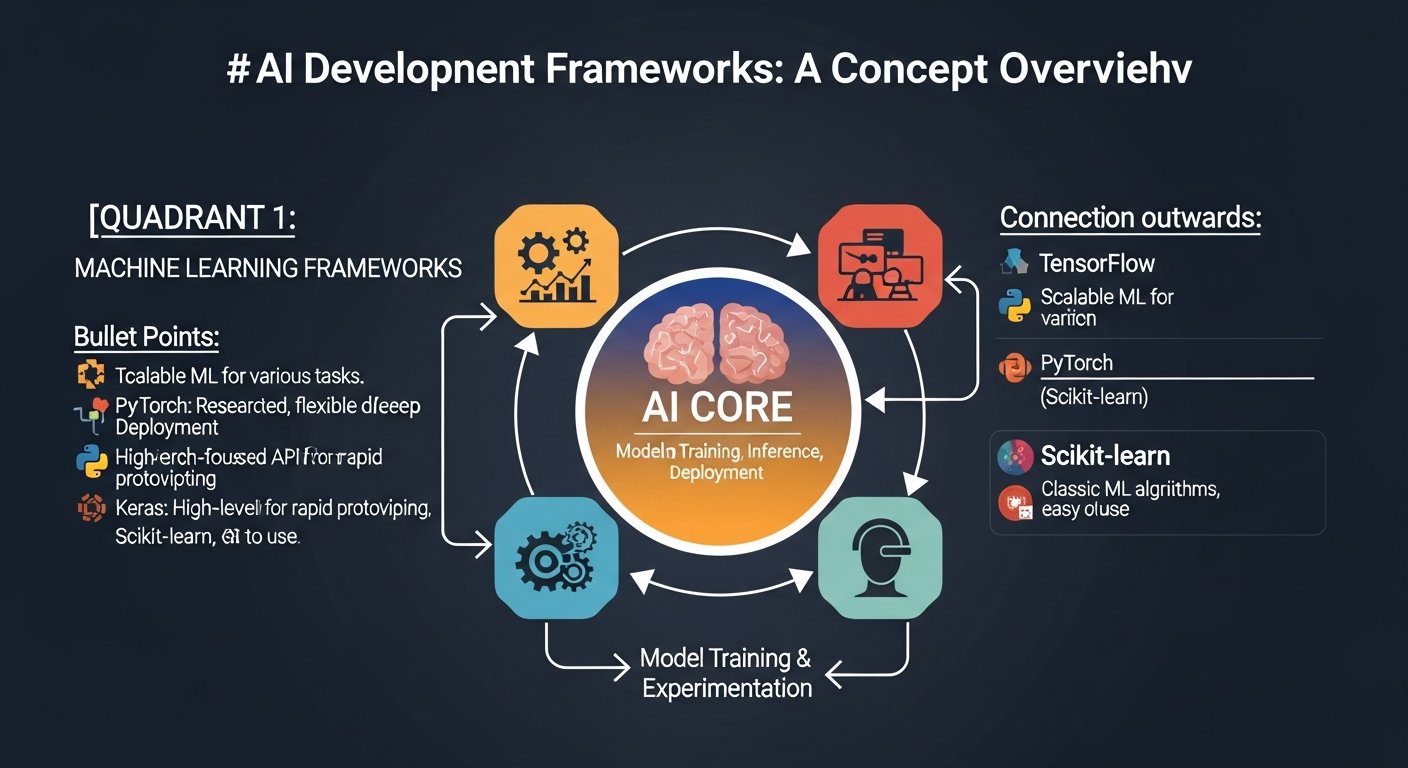

Which AI Development Frameworks Dominate the Industry Today?

The landscape of AI development platforms is rich and diverse, with several key players holding prominent positions. Each offers a unique blend of features, philosophy, and target use cases. Understanding their distinct advantages is crucial for anyone navigating intelligent system development tools, especially as these choices directly influence project outcomes in the modern workplace.

TensorFlow: Scalability and Production Readiness

Developed by Google, TensorFlow stands as one of the most comprehensive and widely adopted deep learning libraries available. Its primary strength lies in its ability to handle large-scale deployments and intricate computational graphs, making it a go-to choice for enterprise-level applications and research requiring significant computational resources. TensorFlow supports distributed computing across CPUs, GPUs, and TPUs, allowing for massive parallelization of model training. Its ecosystem includes tools like TensorFlow Extended (TFX) for production-grade ML pipelines and TensorFlow.js for in-browser machine learning, showcasing its versatility.

- Primary Developer: Google

- Core Focus/Strength: High-performance numerical computation, large-scale deep learning, production deployment.

- Key Features:

- Eager (dynamic) execution by default in TensorFlow 2.x, with optional graph compilation through `tf.function`; Keras serves as the high-level API.

- Robust deployment options (TensorFlow Serving, TensorFlow Lite).

- Advanced visualization tools (TensorBoard).

- Support for distributed training.

- Primary Language(s): Python (primary), C++, Java, JavaScript, Go, Swift, R.

- Community & Ecosystem: Very large and active, extensive documentation, numerous pre-trained models.

- Workplace Application/Impact: TensorFlow’s robust deployment capabilities enable enterprises to integrate AI-powered predictive maintenance systems, reducing downtime and optimizing operational efficiency in manufacturing. It’s also extensively used in natural language processing (NLP) for customer support automation and in computer vision for quality control in assembly lines.
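The two execution styles listed under Key Features can be compared in a few lines. The sketch below assumes TensorFlow 2.x is installed; the function name `scaled_sum` and the input values are hypothetical and chosen only for illustration.

```python
import tensorflow as tf

a = tf.constant([1.0, 2.0])
b = tf.constant([3.0, 4.0])

# Eager execution: each operation runs immediately, like ordinary Python.
eager_result = tf.reduce_sum(a * 2.0 + b)


# Graph execution: tf.function traces the Python function into a graph
# that TensorFlow can optimize and reuse across calls.
@tf.function
def scaled_sum(x, y):
    return tf.reduce_sum(x * 2.0 + y)


graph_result = scaled_sum(a, b)

print(float(eager_result), float(graph_result))
```

Both calls compute the same value. The difference is when and how the computation is scheduled, which tends to matter for performance and for exporting models to serving environments.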

PyTorch: Flexibility for Research and Rapid Prototyping

Originating from Meta’s AI Research lab, PyTorch has rapidly gained traction, particularly within the research community and for projects demanding high flexibility. It is celebrated for its Python-centric approach and dynamic computational graph, which allows for on-the-fly debugging and more intuitive model development. This flexibility makes it ideal for experimentation and rapid prototyping of novel deep learning architectures.

- Primary Developer: Meta (Facebook AI Research)

- Core Focus/Strength: Deep learning research, rapid experimentation, flexible API.

- Key Features:

- Dynamic computational graph (eager execution).

- Native Python integration, making it feel very “Pythonic.”

- Strong support for GPU acceleration.

- Rich ecosystem of research-focused libraries (e.g., Hugging Face Transformers).

- Primary Language(s): Python, C++.

- Community & Ecosystem: Active and growing, particularly strong in academia and cutting-edge research.

- Workplace Application/Impact: PyTorch’s flexibility empowers R&D teams to rapidly prototype novel AI solutions for personalized customer experiences, directly impacting marketing and sales strategies. Its ease of use for complex models also accelerates drug discovery processes and empowers scientific simulations in pharmaceutical and biotechnology sectors.
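A small sketch can show what the dynamic computational graph means in practice. It assumes PyTorch is installed; the function `piecewise` is a made-up example, not part of the PyTorch API.

```python
import torch


def piecewise(x):
    # Ordinary Python branching: the graph is recorded as the code runs,
    # so each call can follow a different path.
    if x > 0:
        return x ** 2
    return -x


# Positive input takes the x ** 2 branch, whose derivative is 2x.
x_pos = torch.tensor(3.0, requires_grad=True)
piecewise(x_pos).backward()

# Negative input takes the -x branch, whose derivative is -1.
x_neg = torch.tensor(-2.0, requires_grad=True)
piecewise(x_neg).backward()

print(x_pos.grad, x_neg.grad)
```

Because the graph is built on the fly, a standard Python debugger or a `print` call can be placed anywhere inside `piecewise`, which is a large part of why this style suits experimentation.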

Keras: Simplifying Deep Learning Development

Keras is a high-level neural networks API, most commonly used with TensorFlow. Earlier multi-backend releases also ran on Theano and Microsoft Cognitive Toolkit (CNTK), both of which are no longer actively developed, while Keras 3 supports TensorFlow, JAX, and PyTorch as backends. Its primary objective is to make deep learning more accessible and user-friendly, abstracting away much of the complexity inherent in lower-level deep learning libraries. Keras emphasizes user experience, modularity, and rapid experimentation.

- Primary Developer: François Chollet (now integrated into TensorFlow 2.x)

- Core Focus/Strength: User-friendliness, rapid prototyping, simplified deep learning.

- Key Features:

- Simple, intuitive API for neural network construction.

- Modular and extensible architecture.

- Easy integration with existing Python libraries (NumPy, SciPy).

- Seamlessly integrated into TensorFlow 2.x as its official high-level API.

- Primary Language(s): Python.

- Community & Ecosystem: Very large due to its integration with TensorFlow; extensive educational resources.

- Workplace Application/Impact: Keras democratizes deep learning, enabling a broader range of data scientists and even citizen data scientists to build sophisticated models without needing to be deep learning experts. This accelerates the development of internal AI tools, from automated content tagging to simple image recognition systems, boosting productivity across various departments.
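As a rough illustration of the simple, modular API described above, the following sketch defines, compiles, and briefly trains a small classifier. It assumes Keras is available through a TensorFlow 2.x installation; the layer sizes and the random placeholder data are arbitrary choices made for this example.

```python
import numpy as np
from tensorflow import keras

# Random placeholder data: 32 samples, 4 features, 3 classes (not a real dataset).
rng = np.random.default_rng(0)
features = rng.normal(size=(32, 4)).astype("float32")
labels = rng.integers(0, 3, size=32)

# Layers are stacked declaratively; Keras infers the weight shapes.
model = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(8, activation="relu"),
    keras.layers.Dense(3, activation="softmax"),
])

# Optimizer, loss, and metrics are selected by name.
model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",
    metrics=["accuracy"],
)

# One short training pass, then class probabilities for five samples.
model.fit(features, labels, epochs=1, verbose=0)
probs = model.predict(features[:5], verbose=0)
print(model.count_params(), probs.shape)
```

The whole workflow fits in three calls (`compile`, `fit`, `predict`), which is the kind of abstraction that makes Keras approachable for teams without deep learning specialists.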

Scikit-learn: The Foundation for Traditional Machine Learning

While TensorFlow and PyTorch dominate deep learning, Scikit-learn remains an indispensable machine learning toolkit for traditional ML algorithms. It provides a wide array of supervised and unsupervised learning algorithms covering classification, regression, clustering, and dimensionality reduction, along with utilities for model selection. Scikit-learn is built upon Python’s scientific computing stack (NumPy and SciPy, with Matplotlib commonly used alongside it for plotting) and is renowned for its consistency, ease of use, and robust documentation.

- Primary Developer: Community-driven open-source project.

- Core Focus/Strength: Traditional machine learning algorithms, data preprocessing, model evaluation.

- Key Features:

- Comprehensive suite of ML algorithms (SVMs, Random Forests, Gradient Boosting, KMeans, etc.).

- Consistent API across all models.

- Tools for data preprocessing, feature selection, and model validation.

- Strong integration with Python’s data science ecosystem.

- Primary Language(s): Python.

- Community & Ecosystem: Mature, very active, excellent documentation, cornerstone of Python data science.

- Workplace Application/Impact: Scikit-learn is foundational for analytics and predictive modeling in business. It’s used for customer churn prediction, fraud detection, credit scoring, and demand forecasting, directly enhancing decision-making processes and optimizing resource allocation across diverse industries like finance, retail, and healthcare.
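The consistent API mentioned under Key Features is easiest to see when two different algorithms are trained and scored with identical code. This sketch assumes scikit-learn is installed and uses its bundled Iris dataset; the choice of models and the split parameters are illustrative, not a recommendation.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Load a small built-in dataset and hold out 25% of it for testing.
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Two very different algorithms behind the same fit/predict interface.
models = {
    "logistic_regression": make_pipeline(
        StandardScaler(), LogisticRegression(max_iter=1000)
    ),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = accuracy_score(y_test, model.predict(X_test))

print(scores)
```

Swapping in another estimator only requires changing the dictionary entry, because every scikit-learn model exposes the same `fit` and `predict` methods.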

[INLINE IMAGE 2: bar chart comparing the adoption rates or popularity trends of TensorFlow, PyTorch, Keras, and Scikit-learn over the last few years among developers or researchers]

How AI Development Frameworks Reshape the Evolving Workplace

These advanced intelligent system development tools are not merely technical constructs; they are powerful drivers of organizational change, profoundly impacting every facet of the evolving workplace. From operational efficiency to skill requirements and entirely new business models, their influence is transformative.

- Automation and Efficiency Gains: Machine learning toolkits enable the automation of repetitive, data-intensive tasks across various functions. In customer service, NLP models power intelligent chatbots, freeing human agents for complex queries. In manufacturing, computer vision frameworks facilitate automated quality inspection, improving accuracy and speed. This leads to significant operational efficiency, cost reduction, and improved output quality.

- Reshaping Job Roles and Skill Sets: The proliferation of AI development frameworks is fundamentally altering the demand for specific skill sets. While some routine roles may be automated, there’s a surging demand for AI specialists—machine learning engineers, data scientists, prompt engineers, and AI ethicists. Existing roles are also evolving; marketing professionals might use AI to personalize campaigns, while HR managers leverage predictive analytics for talent acquisition and retention. The focus shifts towards human-AI collaboration, where creativity, critical thinking, and ethical reasoning become paramount.

- Driving Innovation and New Business Models: By lowering the barrier to entry for AI development, these frameworks empower businesses to innovate at an unprecedented pace. Startups can rapidly prototype AI-powered solutions, and established enterprises can explore new product lines, from personalized healthcare to smart urban infrastructure. This fosters entirely new business models centered around AI-as-a-Service or data-driven insights, creating new market opportunities.

- Enhanced Decision-Making: The ability to process vast amounts of data and extract actionable insights through AI frameworks provides businesses with a competitive edge. Predictive analytics helps forecast market trends, optimize supply chains, and inform strategic planning. This data-driven approach replaces intuition with evidence, leading to more informed and effective decisions across all organizational levels.

- Ethical Considerations and Responsible AI: As AI development platforms become more powerful, the ethical implications of their deployment in the workplace become increasingly critical. Frameworks are starting to incorporate tools for explainable AI (XAI) and fairness assessment to address biases in algorithms and ensure transparency. The evolving workplace demands not just efficient AI, but responsible AI, necessitating new roles like AI ethicists and stronger governance frameworks.

How Do You Choose the Right AI Development Framework for Your Project?

Selecting the optimal machine learning toolkit is a critical decision that can significantly influence the success, efficiency, and scalability of an AI project. There’s no one-size-fits-all answer; the best choice depends on a confluence of factors related to the project’s nature, team’s expertise, and long-term goals. Here are key considerations when evaluating AI development platforms:

- Project Type and Complexity:

- Deep Learning Research & Experimentation: For cutting-edge research, novel architectures, and rapid iteration, frameworks like PyTorch, with its dynamic graph and Pythonic interface, often provide superior flexibility.

- Large-Scale Production Deployment: For robust, scalable, and production-ready applications, especially those requiring deployment across diverse platforms (edge, mobile, web), TensorFlow’s extensive ecosystem (TFX, Lite, JS) makes it a strong contender.

- Traditional Machine Learning & Analytics: For classical algorithms (regression, classification, clustering) and general data analysis, Scikit-learn remains the industry standard due to its comprehensive algorithm set and user-friendly API.

- Simplified Deep Learning: For accessibility and rapid development of common deep learning models, Keras (especially within TensorFlow 2.x) offers an excellent high-level abstraction.

- Team Expertise and Learning Curve:

- Consider your team’s existing proficiency in Python, C++, and familiarity with specific AI concepts. PyTorch often feels more intuitive for Python developers due to its imperative style. TensorFlow 2.x (with Keras) is also very approachable. Scikit-learn is extremely beginner-friendly.

- A higher learning curve for a complex framework might justify the investment if the project’s scope truly demands its advanced features.

- Scalability and Performance Requirements:

- Will your model need to train on massive datasets? Will it need to serve millions of requests per second in production? Frameworks optimized for distributed training and efficient inference (like TensorFlow) are crucial for high-performance, enterprise-scale applications.

- Consider hardware acceleration needs (GPUs, TPUs) and how well the framework leverages them.

- Ecosystem, Community, and Documentation:

- A vibrant community ensures ongoing support, readily available tutorials, and a wealth of pre-trained models and extensions. TensorFlow and PyTorch both boast extensive and active communities.

- Comprehensive and up-to-date documentation is invaluable for troubleshooting and learning.

- Evaluate the availability of relevant third-party libraries (e.g., Hugging Face for NLP, PyTorch Lightning for research).

- Deployment Environment and Infrastructure:

- Where will the model eventually run? On cloud servers, edge devices, mobile apps, or within a web browser? Different frameworks offer specialized tools for various deployment targets (e.g., TensorFlow Lite, ONNX for cross-framework compatibility).

- Consider integration with existing MLOps pipelines and cloud platforms.

- Long-Term Viability and Maintenance:

- Evaluate the framework’s maturity, funding, and commitment from its primary developer. Google’s backing for TensorFlow and Meta’s for PyTorch indicate strong long-term support.

- Consider the ease of maintenance, updates, and future compatibility.
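One way to turn the considerations above into a comparable result is a simple weighted scoring matrix. The sketch below is only an illustration of that idea: the weights, the 1 to 5 ratings, and the candidate names `framework_a` and `framework_b` are hypothetical placeholders, not measurements of any real framework.

```python
# Hypothetical weights for the six considerations above (they sum to 1.0).
WEIGHTS = {
    "project_fit": 0.25,
    "team_expertise": 0.20,
    "scalability": 0.20,
    "ecosystem": 0.15,
    "deployment": 0.10,
    "viability": 0.10,
}

# Placeholder 1-5 ratings for two unnamed candidates.
CANDIDATES = {
    "framework_a": {
        "project_fit": 5, "team_expertise": 3, "scalability": 3,
        "ecosystem": 4, "deployment": 2, "viability": 4,
    },
    "framework_b": {
        "project_fit": 3, "team_expertise": 4, "scalability": 5,
        "ecosystem": 4, "deployment": 5, "viability": 4,
    },
}


def weighted_score(ratings, weights):
    """Return the weighted sum of a candidate's ratings."""
    return sum(weights[criterion] * ratings[criterion] for criterion in weights)


# Rank candidates from highest to lowest weighted score.
ranked = sorted(
    ((name, weighted_score(ratings, WEIGHTS)) for name, ratings in CANDIDATES.items()),
    key=lambda item: item[1],
    reverse=True,
)

for name, score in ranked:
    print(f"{name}: {score:.2f}")
```

The numbers matter less than the exercise: agreeing on weights forces a team to state which considerations actually drive the decision for a given project.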

Common Mistakes in AI Framework Selection

Even seasoned teams can fall prey to common pitfalls when choosing an intelligent system development tool. Avoiding these can save significant time and resources:

- Chasing the Hype: Opting for a trendy framework without a clear justification based on project needs or team expertise. The newest framework isn’t always the best for every problem.

- Underestimating the Learning Curve: Choosing a complex framework for a team with limited experience, leading to slower development, frustration, and increased errors.

- Ignoring Deployment Needs: Developing a powerful model only to find that the chosen framework makes it difficult or inefficient to deploy to the target environment (e.g., edge devices with limited resources).

- Lack of Scalability Planning: Selecting a framework that performs well for small prototypes but struggles when scaled up to production-grade data volumes or user loads.

- Over-reliance on a Single Tool: Believing one framework can solve all AI problems. Often, a combination of tools (e.g., Scikit-learn for feature engineering, PyTorch for a deep learning component) yields the best results.

- Neglecting Community Support: Choosing a niche or nascent framework with limited documentation or community, leading to isolation when encountering complex issues.

The Future Landscape of AI Frameworks and Workplace Innovation

The evolution of AI development platforms is a continuous journey, mirroring the rapid advancements in artificial intelligence itself. The future promises even more sophisticated, accessible, and ethically aware intelligent system development tools, further cementing their role in shaping the evolving workplace.

- MLOps Integration: Expect deeper integration of MLOps (Machine Learning Operations) tools and practices directly into frameworks. This will streamline the entire lifecycle from experimentation to deployment, monitoring, and retraining, making AI development more robust and production-ready.

- Explainable AI (XAI) and Ethical AI: As AI becomes more pervasive, the demand for transparency and fairness will grow. Future deep learning libraries will likely incorporate more built-in features for XAI (e.g., model interpretability tools) and ethical AI assessment, helping developers identify and mitigate biases, fostering responsible AI in the workplace.

- Automated Machine Learning (AutoML): AutoML, which automates aspects like model selection, hyperparameter tuning, and even neural architecture search, will become more sophisticated and integrated. This will lower the entry barrier even further, allowing more domain experts without deep ML expertise to leverage AI effectively.

- Edge AI and Resource Optimization: The push for AI on edge devices (smartphones, IoT sensors) will drive frameworks to become even more efficient in terms of memory footprint and computational requirements. Specialized AI development platforms for embedded systems will continue to emerge.

- Multi-Modal AI: Future frameworks will increasingly support complex multi-modal data inputs (e.g., combining vision, language, and audio) and outputs, enabling more human-like AI systems capable of understanding and interacting with the world in richer ways. This will unlock new applications in fields like robotics, virtual reality, and advanced human-computer interaction in the workplace.

The journey of these platforms is far from over. As they continue to evolve, they will further empower businesses to harness the potential of AI, driving unprecedented levels of automation, personalization, and strategic insight. This constant innovation ensures that the impact of intelligent system development tools on the future of work will only deepen, making AI a truly ubiquitous force in our professional lives.

| Framework Name | Primary Developer/Origin | Core Focus/Strength | Key Features | Primary Language(s) | Community & Ecosystem | Workplace Application/Impact |
|---|---|---|---|---|---|---|
| TensorFlow | Google | Deep Learning, Production Readiness, Scalability | Distributed training, TensorFlow Serving, TensorBoard, Keras API | Python, C++, Java, JS | Very Large, Active, Enterprise-focused | Enterprise-scale deployment, predictive maintenance, NLP in customer service |
| PyTorch | Meta (Facebook AI Research) | Deep Learning Research, Flexibility, Rapid Prototyping | Dynamic computational graph, “Pythonic” API, GPU acceleration | Python, C++ | Active, Growing, Research-centric | Rapid R&D, personalized experiences, scientific discovery |
| Keras | François Chollet (now TF 2.x) | User-friendly Deep Learning, Rapid Experimentation | High-level API, modularity, runs on TensorFlow (Keras 3 also supports JAX and PyTorch) | Python | Very Large (via TF), Excellent educational resources | Democratizing DL, internal tool development, content tagging |
| Scikit-learn | Community-driven | Traditional Machine Learning, Analytics, Preprocessing | Comprehensive ML algorithms, consistent API, data utilities | Python | Mature, Very Active, Data Science cornerstone | Fraud detection, churn prediction, demand forecasting, credit scoring |


About the Author

Lena Petrova, Principal AI Ethicist & Futures Strategist — I’m a passionate advocate for responsible innovation, guiding organizations to leverage AI ethically for sustainable growth and a human-centric future of work.

Reviewed by Kai Miller, Lead Content Strategist, AI & Innovation — Last reviewed: March 27, 2026

For a broader understanding of the theoretical underpinnings and societal implications that these practical tools bring to life, explore our comprehensive guide on Artificial Intelligence (AI) & Machine Learning.