Artificial Intelligence Trends 2026: What Every Enterprise Must Know

- Autonomous AI agents will replace single-task bots with goal-oriented multi-agent systems by 2026.

- Multimodal AI (text + image + audio + video) will become the standard interface paradigm.

- Edge AI chips (NVIDIA Jetson, Apple M-series, Qualcomm NPU) will embed intelligence into billions of devices.

- The EU AI Act and NIST AI RMF set the compliance baseline for enterprise AI deployment.

- Synthetic data will help address training data scarcity and bias in regulated industries.

The Rise of Autonomous AI Agents and Orchestration

By 2026, the concept of AI as a mere tool will begin to give way to AI as a proactive, goal-oriented agent. These aren’t just sophisticated chatbots; autonomous AI agents are designed to understand high-level objectives, break them down into actionable steps, interact with various systems and even other agents, and execute tasks with minimal human intervention. Imagine an AI agent not just answering your travel queries, but proactively booking flights, arranging accommodation, managing your itinerary, and even adjusting plans based on real-time events like flight delays, all while adhering to your preferences and budget. This shift represents a move from reactive AI to truly proactive, decision-making intelligence.
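The plan, act, and replan cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular agent framework works. The step names, the `COSTS` table, and the `TravelAgent` class are hypothetical. The plan is hard-coded where a real agent would typically ask an LLM to produce it, and a simulated event feed stands in for live flight data.

```python
from dataclasses import dataclass, field

# Hypothetical per-step costs; a real agent would query booking APIs for prices.
COSTS = {"book_flight": 400.0, "book_hotel": 300.0, "rebook_flight": 150.0, "build_itinerary": 0.0}


@dataclass
class Step:
    name: str
    done: bool = False


@dataclass
class TravelAgent:
    budget: float
    spent: float = 0.0
    plan: list = field(default_factory=list)
    log: list = field(default_factory=list)

    def make_plan(self) -> None:
        # Decompose the high-level goal ("plan my trip") into ordered, actionable steps.
        # Hard-coded here; an LLM-based planner would typically generate this list.
        self.plan = [Step("book_flight"), Step("book_hotel"), Step("build_itinerary")]

    def execute(self, step: Step) -> None:
        cost = COSTS[step.name]
        if self.spent + cost > self.budget:
            # Respect the user's budget: hand the decision back to a human.
            self.log.append(f"ask_human:{step.name}")
        else:
            self.spent += cost
            self.log.append(step.name)
        step.done = True

    def replan(self, event: str) -> None:
        if event == "flight_delayed":
            # Keep every step except the itinerary, then rebook and rebuild the itinerary last.
            kept = [s for s in self.plan if s.name != "build_itinerary"]
            self.plan = kept + [Step("rebook_flight"), Step("build_itinerary")]

    def run(self, events: dict) -> list:
        # `events` maps "after N executed steps" to an event name (a simulated real-time feed).
        self.make_plan()
        ticks = 0
        while pending := [s for s in self.plan if not s.done]:
            self.execute(pending[0])
            ticks += 1
            if ticks in events:
                self.replan(events[ticks])
        return self.log


agent = TravelAgent(budget=1000.0)
agent.run({3: "flight_delayed"})
```

With a budget of 1,000 and a delay reported after the third step, the agent books the flight and hotel, builds the itinerary, then rebooks the flight and rebuilds the itinerary. With a budget of 800, the rebooking would exceed the limit, so the agent hands it back to a human instead of executing it.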

Multi-Agent Systems and Collaborative Intelligence

The true power of autonomous agents will be amplified through multi-agent systems, in which several specialized AIs collaborate on complex objectives. In a corporate setting, for instance, one agent might handle market analysis, another supply chain optimization, and a third customer engagement, all coordinating to launch a new product. In customer service, platforms like Zendesk and ServiceNow are integrating multi-agent AI to handle complex inquiries, from triaging initial requests to proactively resolving issues, with the aim of shortening response times and raising customer satisfaction. This orchestration layer is often powered by large language models (LLMs) such as GPT-4o (OpenAI), Llama 3 (Meta), Claude 3.5 (Anthropic), and Gemini 1.5 (Google), which act as reasoning and coordination engines; getting good results from them depends on careful prompt engineering.

Research labs such as Google DeepMind are already exploring foundation models capable of planning and interacting with digital environments, laying the groundwork for agents that can navigate and operate complex software interfaces autonomously. The infrastructure to deploy these agents is maturing rapidly, with platforms like Microsoft Azure AI and AWS SageMaker offering tools for development and deployment. Expect these systems to move from research labs into practical applications across logistics, finance, and personalized digital assistance, boosting productivity and operational efficiency. Their ability to learn from their interactions and adapt their strategies will make them increasingly indispensable.
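One common way to coordinate specialized agents is a shared "blackboard" that every agent reads from and writes to, with a coordinator that runs each agent as soon as its inputs are available. The sketch below assumes that pattern. The three agents, the keys they require, and the demand figures are hypothetical placeholders for what would, in practice, be LLM-backed agents with tool access.

```python
from typing import Callable, Dict, List, Tuple

Context = Dict[str, object]
# (name, keys the agent needs in the shared context, function returning new keys)
AgentSpec = Tuple[str, Tuple[str, ...], Callable[[Context], Context]]


def market_analyst(ctx: Context) -> Context:
    # Hypothetical: pick the region with the highest demand score.
    demand = ctx["demand_by_region"]
    return {"target_region": max(demand, key=demand.get)}


def supply_planner(ctx: Context) -> Context:
    # Hypothetical: size the first shipment from the chosen region's demand score.
    region = ctx["target_region"]
    return {"units_to_ship": ctx["demand_by_region"][region] * 100}


def engagement_agent(ctx: Context) -> Context:
    return {"campaign": f"Launch campaign in {ctx['target_region']}"}


def orchestrate(ctx: Context, agents: List[AgentSpec]) -> Tuple[Context, List[str]]:
    """Run each agent once its inputs exist, merging outputs into a shared blackboard."""
    pending, trace = list(agents), []
    while pending:
        ready = [a for a in pending if all(k in ctx for k in a[1])]
        if not ready:
            names = ", ".join(a[0] for a in pending)
            raise RuntimeError(f"unsatisfiable inputs for: {names}")
        for name, _needs, fn in ready:
            ctx.update(fn(ctx))
            trace.append(name)
        pending = [a for a in pending if a not in ready]
    return ctx, trace


agents = [
    ("engagement", ("target_region",), engagement_agent),
    ("supply", ("target_region", "demand_by_region"), supply_planner),
    ("market", ("demand_by_region",), market_analyst),
]
result, trace = orchestrate({"demand_by_region": {"EU": 3, "US": 5}}, agents)
```

The agents can be registered in any order: the coordinator derives the execution order from data dependencies (here market analysis first, then engagement and supply) and fails loudly if some agent's inputs can never be satisfied.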

Proactive Problem-Solving and Decision-Making

These agents won’t just follow instructions; they’ll anticipate needs and resolve unforeseen issues. Consider smart manufacturing facilities, where companies like Siemens are leveraging AI agents to monitor production lines, predict equipment failures before they occur, and even automatically re-route production to alternative machines or order replacement parts, minimizing downtime. In healthcare, organizations like the Mayo Clinic are exploring how autonomous agents could manage patient schedules, triage incoming requests, and even assist in diagnosis by cross-referencing patient data with vast medical knowledge bases, flagging critical conditions for human physicians. The implications for industries grappling with complexity and dynamic environments are profound, promising not just efficiency gains but also a new era of resilience and adaptability.
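Real predictive-maintenance systems typically rely on models trained on failure histories. The sketch below uses a much simpler stand-in. It flags a machine whose latest sensor reading sits more than three standard deviations from its own recent history (a z-score test), then routes new jobs only to unflagged machines. The machine names, readings, and threshold are illustrative assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag a reading more than `threshold` standard deviations from recent history."""
    mu, sigma = mean(history), stdev(history)  # stdev needs at least two readings
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


def route_jobs(jobs, machines, history, latest):
    """Assign jobs round-robin to machines whose latest reading looks normal."""
    healthy = [m for m in machines if not is_anomalous(history[m], latest[m])]
    if not healthy:
        raise RuntimeError("no healthy machines available; escalate to an operator")
    return {job: healthy[i % len(healthy)] for i, job in enumerate(jobs)}


# Illustrative vibration readings (arbitrary units).
history = {
    "M1": [1.0, 1.1, 0.9, 1.0, 1.05],
    "M2": [1.0, 1.0, 1.1, 0.95, 1.0],
    "M3": [2.0, 2.1, 1.9, 2.0],
}
latest = {"M1": 1.02, "M2": 2.5, "M3": 2.05}
assignment = route_jobs(["job-1", "job-2", "job-3"], ["M1", "M2", "M3"], history, latest)
```

Here M2's spike removes it from the rotation, and its work is spread across M1 and M3 until an operator or a maintenance agent clears it.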

Among the key vendors and models driving these trends, OpenAI (GPT-4o, its multimodal flagship), Anthropic (Claude 3.5), Meta (Llama 3, open-weight), and Google (Gemini 1.5, with a 1M-token context window) are advancing foundation model capabilities rapidly. On the infrastructure side, Microsoft Azure AI, AWS SageMaker, and NVIDIA (with Jetson edge computing modules and TensorRT inference optimization) provide the deployment layer. For edge deployments, hardware such as Apple M-series chips, Qualcomm AI NPUs, and Google’s Edge TPU lets INT8-quantized models run locally, for compact models often at sub-10 ms latency. The ONNX interchange format, together with optimizing runtimes such as TensorRT, lets models trained in PyTorch or TensorFlow be deployed efficiently across these diverse hardware platforms.

Multimodal AI: Bridging the Sensory Gap

While current generative AI models primarily excel in processing and generating text or images, 2026 will see a significant leap forward in multimodal AI. This refers to AI systems capable of understanding, interpreting, and generating information across multiple sensory modalities simultaneously – text, images, audio, video, and even haptic feedback. This fusion of senses is critical for AI to interact with the world and humans in a more natural, intuitive, and contextually aware manner, mirroring human perception.

Beyond Text and Image: Towards Holistic Understanding

The next generation of AI will move beyond isolated processing of individual data types. Imagine an AI assistant that not only understands your spoken words but also interprets your tone of voice, recognizes facial expressions from video input, and even perceives the context of your physical environment through camera feeds. This holistic understanding allows for far more nuanced and empathetic interactions. For example, a customer service AI might detect frustration in a caller’s voice and combine it with keywords in their query to escalate the issue or offer a more sympathetic response. Tools like OpenAI’s Sora, which generates realistic and imaginative videos from text, are early indicators of this trend, but by 2026, these capabilities will extend to real-time understanding and generation across live streams of data.
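The frustrated-caller example amounts to combining signals from two modalities. Below is a minimal late-fusion sketch. It assumes a hypothetical upstream speech-emotion model that already returns a frustration score between 0 and 1. The keyword list, fusion weights, and escalation threshold are illustrative, not tuned values.

```python
# Illustrative keyword list; a production system would use a trained text classifier.
FRUSTRATION_KEYWORDS = {"cancel", "refund", "unacceptable", "again", "still"}


def text_frustration(transcript: str) -> float:
    """Crude text-modality score in [0, 1]: saturates at two keyword hits."""
    words = {w.strip(".,!?").lower() for w in transcript.split()}
    return min(len(words & FRUSTRATION_KEYWORDS) / 2, 1.0)


def route_call(audio_frustration: float, transcript: str,
               w_audio: float = 0.6, w_text: float = 0.4, threshold: float = 0.5) -> str:
    """Late fusion: weight each modality's score, then compare against a threshold.

    `audio_frustration` is assumed to come from a separate speech-emotion model
    (0 = calm, 1 = very frustrated).
    """
    fused = w_audio * audio_frustration + w_text * text_frustration(transcript)
    return "escalate_to_human" if fused >= threshold else "continue_self_service"
```

Late fusion (scoring each modality separately, then combining the scores) is the simplest design. Natively multimodal models instead learn a joint representation across audio, video, and text, which lets them pick up cues that neither modality reveals alone.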

Enhanced Human-Computer Interaction and Creative Expression

Multimodal AI will revolutionize how we interact with technology. Voice assistants will become truly conversational, understanding complex commands that blend visual and auditory cues (“Show me that blue shirt I saw in the ad yesterday, and play music similar to what was playing in the background”). In creative industries, artists will be able to describe their visions in words, sketches, and mood music, with AI generating sophisticated drafts that capture the essence of their multifaceted input. This enables a more fluid and less restrictive creative process. In augmented and virtual reality, multimodal AI will power immersive experiences where digital entities can react not just to your gaze or gestures, but also to your speech, emotional state, and even physiological responses, blurring the lines between the real and the simulated. Companies like Google, Meta, and various startups are heavily investing in this domain, understanding that natural, multimodal interaction is key to unlocking the full potential of future computing platforms.

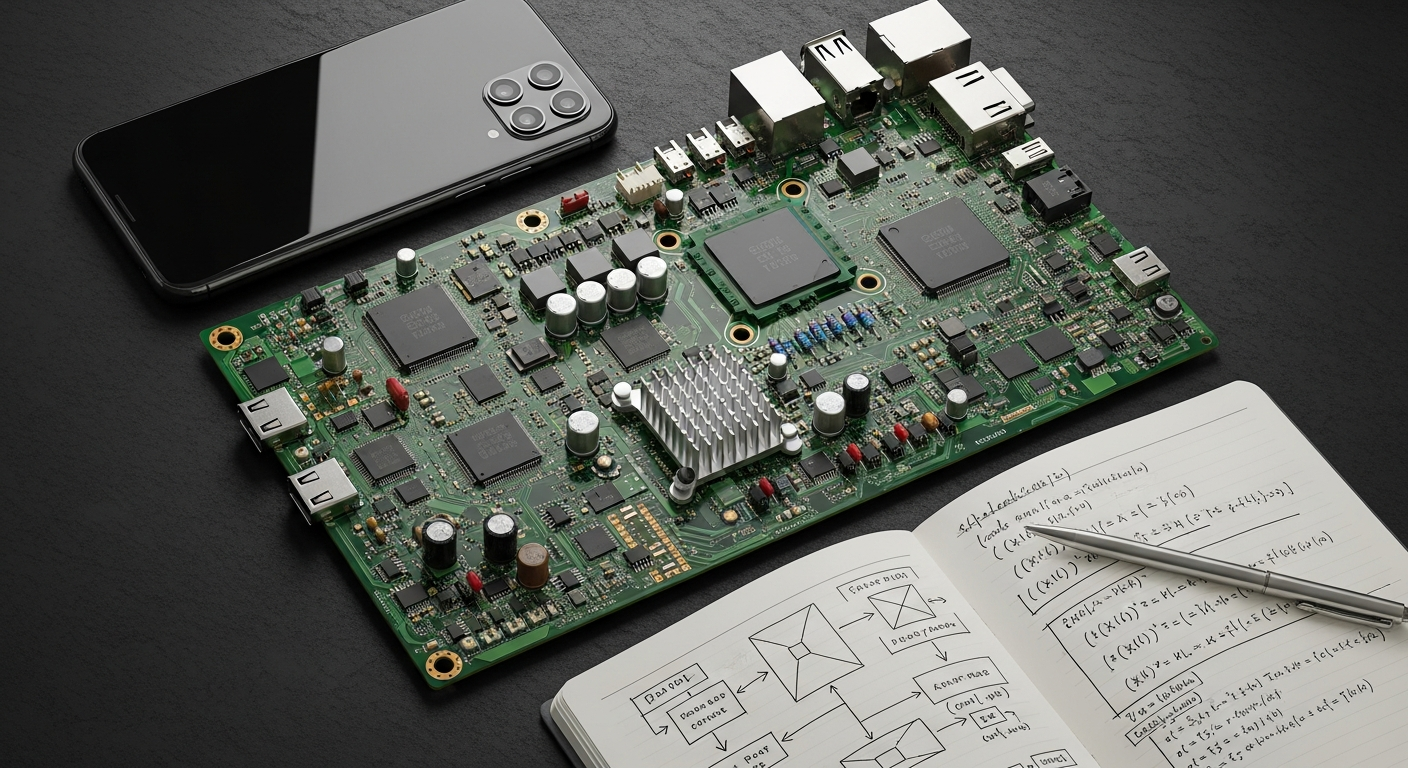

Edge AI: Intelligence Everywhere, Instantly

The traditional model of sending all data to centralized cloud servers for AI processing is becoming increasingly inefficient, slow, and expensive, especially with the explosion of connected devices. By 2026, Edge AI – the deployment of AI models directly onto devices at the “edge” of the network, closer to where data is generated – will be a dominant paradigm. This shift brings intelligence directly to sensors, cameras, robots, and even personal devices, enabling real-time decision-making without reliance on constant cloud connectivity.

Real-time Processing and Reduced Latency

The primary advantage of Edge AI is speed. In applications where milliseconds matter, such as autonomous vehicles, industrial automation, or critical infrastructure monitoring, processing data locally is essential. An autonomous car needs to identify obstacles and react instantly; waiting for a cloud round trip is not an option. Similarly, in smart factories, AI-powered cameras can detect manufacturing defects or safety hazards in real time, triggering immediate corrective actions. Local processing is usually made practical by compressing models with techniques like INT8 quantization and pruning, then deploying them via the ONNX model format and inference runtimes such as NVIDIA TensorRT. The result is efficient on-device inference with much lower latency, making AI systems more responsive and reliable in dynamic environments. The growing availability of specialized AI hardware designed for low-power, high-performance inference at the edge, such as NVIDIA’s Jetson platform, Apple M-series chips, Qualcomm AI NPUs, and Google’s Edge TPU, is fueling this trend, making powerful AI models feasible on smaller, more energy-efficient devices.
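INT8 quantization stores weights as 8-bit integers plus a floating-point scale factor. The sketch below shows symmetric per-tensor quantization and an integer dot product, in plain Python for readability. It is a deliberate simplification: production toolchains such as TensorRT or ONNX Runtime calibrate scales on representative data and often quantize per channel rather than per tensor.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map [-max|x|, max|x|] onto [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer, then clamp to the int8 range used here.
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    return [qi * scale for qi in q]


def int8_dot(qa, sa, qb, sb):
    # Multiply-accumulate purely in integers (hardware would use an int32 accumulator),
    # then apply both scale factors once at the end.
    acc = sum(x * y for x, y in zip(qa, qb))
    return acc * sa * sb


weights = [0.5, -1.27, 0.0, 1.27]
q_w, s_w = quantize_int8(weights)
```

Each weight now occupies one byte instead of four, and the rounding error per value is at most half the scale factor, which is why a well-calibrated INT8 model usually loses little accuracy.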

Enhanced Privacy, Security, and Resilience

Processing data on-device inherently improves privacy and security by minimizing the transfer of sensitive information to the cloud. For applications involving personal health data, surveillance footage, or proprietary industrial processes, keeping data local is paramount for compliance and trust. Furthermore, Edge AI systems are often more resilient. They can continue to operate and make intelligent decisions even if network connectivity is intermittent or completely lost, which is crucial for remote operations, disaster response, or critical infrastructure. This decentralized intelligence network promises to embed AI into the very fabric of our physical world, making smart environments and intelligent objects truly ubiquitous. From smart city sensors that manage traffic flow to personal wearables that monitor health, Edge AI will power a vast array of interconnected, intelligent devices.
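The resilience point can be made concrete with a store-and-forward pattern: decisions are made locally on every reading, while data bound for the cloud is buffered until a connection is available. The `EdgeNode` class, its threshold rule, and the `send` callback below are hypothetical. The bounded `deque` caps memory use by discarding the oldest readings when the buffer fills, which is one possible policy among several.

```python
from collections import deque
from typing import Callable


class EdgeNode:
    """Hypothetical edge device: decides locally, uploads when a link is available."""

    def __init__(self, threshold: float, max_buffer: int = 1000):
        self.threshold = threshold
        # Bounded buffer: when full, the oldest readings are dropped to cap memory use.
        self.outbox = deque(maxlen=max_buffer)
        self.actions = []

    def process(self, reading: float, online: bool, send: Callable[[float], None]) -> None:
        # The safety decision never waits for the network.
        if reading > self.threshold:
            self.actions.append(("shutdown_valve", reading))
        self.outbox.append(reading)
        if online:
            self.flush(send)

    def flush(self, send: Callable[[float], None]) -> None:
        # Forward buffered readings in arrival order. A reading is removed only after
        # `send` returns, so a failed upload leaves it in the buffer for the next try.
        while self.outbox:
            send(self.outbox[0])
            self.outbox.popleft()
```

While offline, the node still acts on the dangerous reading immediately; once connectivity returns, the backlog is uploaded in order.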

Hyper-Personalization and Adaptive Learning at Scale

The promise of personalized experiences has been a long-standing goal of digital technology. By 2026, AI will elevate this to an unprecedented level of hyper-personalization, capable of dynamically adapting to individual users, their contexts, and their evolving needs in real-time. This goes far beyond mere recommendations; it involves AI systems actively learning, anticipating, and tailoring interactions, content, and services uniquely for each person.

Dynamic Content and Experience Generation

Imagine an educational platform where AI doesn’t just suggest lessons, but dynamically generates custom learning paths, explanations, and exercises based on a student’s real-time performance, learning style, and even emotional state. In retail, AI will move beyond suggesting products to dynamically creating personalized storefronts, product bundles, and even custom-designed items based on immediate preferences and historical data, all while considering factors like local inventory and delivery logistics. This level of personalization will transform user engagement, making every digital interaction feel uniquely crafted.
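Adaptive learning paths need two ingredients: a running estimate of what the learner has mastered and a rule for choosing what comes next. The sketch below assumes the simplest version of each, an exponential moving average of correctness per skill and a "practice the weakest skill at a matching difficulty" policy. The skill names, the smoothing factor `alpha`, and the difficulty cut-offs are all illustrative. Production systems often use richer learner models such as Bayesian knowledge tracing.

```python
class LearnerModel:
    """Hypothetical per-skill mastery tracker for an adaptive learning path."""

    def __init__(self, skills, alpha=0.3):
        self.alpha = alpha                          # weight given to the newest answer
        self.mastery = {s: 0.5 for s in skills}     # neutral prior for every skill

    def record(self, skill, correct):
        # Exponential moving average of correctness (1 = right, 0 = wrong).
        target = 1.0 if correct else 0.0
        self.mastery[skill] += self.alpha * (target - self.mastery[skill])

    def next_exercise(self):
        # Practice the weakest skill, at a difficulty matched to current mastery.
        skill = min(self.mastery, key=self.mastery.get)
        m = self.mastery[skill]
        level = "easy" if m < 0.4 else "medium" if m < 0.7 else "hard"
        return skill, level


learner = LearnerModel(["fractions", "decimals"])
learner.record("fractions", correct=False)
learner.record("decimals", correct=True)
```

After one wrong fractions answer and one right decimals answer, the next exercise is an easy fractions problem; a few correct fractions answers later, the path shifts to medium-difficulty decimals.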

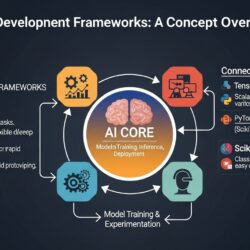

MLOps and AI Deployment Infrastructure

As AI models become more complex and critical to business operations, the need for robust MLOps (Machine Learning Operations) practices is paramount. MLOps in 2026 represents the convergence of DevOps, ML, and data engineering, ensuring the seamless development, deployment, monitoring, and maintenance of AI models in production. Enterprises recognize that simply building models isn’t enough; models must be scalable, reliable, and continuously optimized to deliver real-world value. Industry analysts, including Gartner, have reported that a large share of AI projects never make it from pilot to production, often because of gaps in deployment and operations practices, which is why deployment infrastructure is now mission-critical.

Key tools facilitating effective MLOps include:

- Experiment Tracking: MLflow for managing the machine learning lifecycle, tracking experiments, and packaging models.

- Pipeline Orchestration: Kubeflow for deploying and managing ML workflows on Kubernetes, and TFX (TensorFlow Extended) for building production ML pipelines.

- Model Serving: Seldon Core for deploying ML models on Kubernetes, providing scalable and secure inference.

Crucial for maintaining model performance and compliance are robust monitoring and observability solutions:

- Data Drift and Model Performance: Evidently AI and WhyLabs for monitoring data quality, model drift, and performance anomalies.

- Infrastructure Metrics: Prometheus for collecting and querying real-time metrics from ML infrastructure.
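Drift monitors compare the distribution of live inputs against a reference (for example, training) distribution. One widely used statistic for this is the Population Stability Index (PSI), sketched below in plain Python. Binning on the reference range and the small `eps` floor for empty bins are simplifying assumptions, and the thresholds in the docstring are a common industry rule of thumb rather than a standard.

```python
import math


def psi(reference, live, bins=10, eps=1e-6):
    """Population Stability Index of `live` versus `reference`.

    Common rule of thumb: < 0.1 stable, 0.1-0.25 moderate shift, > 0.25 significant drift.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference column

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range fall into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays finite.
        return [max(c / len(values), eps) for c in counts]

    ref_p, live_p = proportions(reference), proportions(live)
    return sum((a - e) * math.log(a / e) for e, a in zip(ref_p, live_p))


reference = [i / 100 for i in range(1000)]   # illustrative feature values
shifted = [x + 5 for x in reference]         # the same feature after a large shift
```

Comparing `reference` with itself gives a PSI of zero, while the shifted sample scores far above the 0.25 alert level; in production, a check like this would run per feature on each scoring window, with the result exported as a metric for alerting.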

Data Governance and Privacy-Preserving AI

With the increasing volume and sensitivity of data used by AI, robust data governance and privacy-preserving AI techniques are no longer optional but essential. These approaches ensure that AI systems can deliver insights and value without compromising individual privacy or violating regulatory compliance.

Key privacy-preserving techniques gaining traction include:

- Federated Learning: Allows AI models to be trained on decentralized datasets located at the edge (e.g., mobile devices, hospitals) without centralizing the raw data, thereby protecting data privacy.

- Differential Privacy: Adds carefully calibrated random noise to query results (or to the training process) so that the output reveals almost nothing about whether any single individual’s record was included, with the privacy loss bounded by a parameter (epsilon), while still allowing accurate aggregate analysis.

- Secure Multi-Party Computation (SMC): Enables multiple parties to jointly compute a function over their private inputs without revealing those inputs to each other.
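Each of the three techniques above reduces to a small core computation. The sketch below shows, in plain Python, federated averaging of client model weights, the Laplace mechanism for a differentially private count, and a secure sum built on additive secret sharing. It is illustrative only: the function names are hypothetical, the prime modulus is an arbitrary choice, and real deployments add secure channels, client sampling, and careful privacy accounting across repeated queries.

```python
import random

PRIME = 2**61 - 1  # a Mersenne prime; an arbitrary modulus for this sketch


def fed_avg(client_weights, client_sizes):
    """Federated averaging: combine client weight vectors, weighted by local sample counts."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [sum(w[j] * n for w, n in zip(client_weights, client_sizes)) / total
            for j in range(dim)]


def laplace_count(true_count, epsilon, rng):
    """Laplace mechanism for a counting query (sensitivity 1): noise scale b = 1/epsilon."""
    b = 1.0 / epsilon
    # The difference of two independent Exponential(rate 1/b) draws is Laplace(0, b).
    return true_count + rng.expovariate(1 / b) - rng.expovariate(1 / b)


def share(secret, n, rng):
    """Additive secret sharing mod PRIME: any n-1 of the n shares look uniformly random."""
    parts = [rng.randrange(PRIME) for _ in range(n - 1)]
    return parts + [(secret - sum(parts)) % PRIME]


def secure_sum(inputs, rng):
    """Each party splits its input into shares; only the total is ever reconstructed."""
    n = len(inputs)
    shares = [share(x, n, rng) for x in inputs]                           # row i: party i's shares
    partials = [sum(row[j] for row in shares) % PRIME for j in range(n)]  # party j's local sum
    return sum(partials) % PRIME


rng = random.Random(42)
avg = fed_avg([[1.0, 2.0], [3.0, 4.0]], [1, 3])
noisy = laplace_count(128, epsilon=0.5, rng=rng)
total = secure_sum([10, 20, 12], rng)
```

Here `avg` is `[2.5, 3.5]`, because the second client holds three times as much data, and `total` is `42`, even though no party ever sees another party's raw input. A smaller `epsilon` means more noise and stronger privacy.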

Enterprises are increasingly adopting specialized data governance tools like Immuta and Privacera to automate policy enforcement, manage access controls, and ensure compliance across diverse data estates. Adherence to evolving standards from ISO/IEC JTC 1/SC 42, the joint ISO/IEC subcommittee on artificial intelligence (for example, ISO/IEC 42001 for AI management systems), and compliance with regulations from bodies such as the FTC and sector-specific authorities (e.g., the FDA for medical AI diagnostics) will also be critical for building trustworthy AI systems.

Practical explainability tools are becoming indispensable. LIME (Local Interpretable Model-agnostic Explanations), for instance, can explain why a model approved or denied a credit application by fitting a simple surrogate model around that single decision and highlighting which input features (e.g., income, credit score, debt-to-income ratio) were most influential. SHAP (SHapley Additive exPlanations) attributes each feature’s contribution to an individual prediction using Shapley values from cooperative game theory; aggregating these attributions across a dataset also yields a global view of feature importance. According to McKinsey, companies with mature AI governance frameworks report 25% fewer AI-related incidents and 30% faster time-to-production for new AI models.
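To make the Shapley idea behind SHAP concrete, the sketch below computes exact Shapley values for a single credit decision by enumerating every subset of features, with "absent" features replaced by baseline values. The linear scoring model, its weights, and the baseline applicant are hypothetical. Exact enumeration costs on the order of 2^n model evaluations for n features, which is why the SHAP library relies on approximations and model-specific algorithms such as KernelSHAP and TreeSHAP.

```python
from itertools import combinations
from math import factorial

FEATURES = ["income", "credit_score", "debt_to_income"]
# Hypothetical linear scoring model (higher = more likely to approve).
WEIGHTS = {"income": 0.00002, "credit_score": 0.01, "debt_to_income": -3.0}


def score(applicant):
    return sum(WEIGHTS[f] * applicant[f] for f in FEATURES)


def shapley_values(model, x, baseline):
    """Exact Shapley attributions; features outside a coalition take baseline values."""
    n = len(FEATURES)
    phi = {}
    for f in FEATURES:
        others = [g for g in FEATURES if g != f]
        total = 0.0
        for k in range(n):  # coalition sizes 0..n-1 drawn from the other features
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                present = set(coalition)
                without_f = {g: x[g] if g in present else baseline[g] for g in FEATURES}
                with_f = {**without_f, f: x[f]}
                total += weight * (model(with_f) - model(without_f))
        phi[f] = total
    return phi


applicant = {"income": 50000, "credit_score": 700, "debt_to_income": 0.4}
baseline = {"income": 60000, "credit_score": 650, "debt_to_income": 0.3}
phi = shapley_values(score, applicant, baseline)
```

For a linear model, each attribution reduces to weight * (value - baseline): here the above-baseline credit score pushes the score up while the lower income and higher debt-to-income ratio pull it down. The attributions always sum to the difference between the applicant's score and the baseline score (the "efficiency" property), which is what makes them usable as an explanation of one decision.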

Key AI Vendors and Model Ecosystem (2026 Reference)

Beyond the major players, the AI ecosystem in 2026 includes a rich set of open and commercial models:

- Hugging Face — The central hub for open-source AI models, datasets, and demos. With over 500,000 models hosted, it democratizes access to foundation models for enterprise teams.

- Mistral AI — European AI startup offering high-performance open-weight models (Mistral 7B, Mixtral MoE) that run efficiently on-premise for organizations requiring data privacy.

- Perplexity AI — AI-powered search engine demonstrating how LLMs integrate with real-time web retrieval, a key pattern for enterprise knowledge management.

- Scale AI / Labelbox — Data annotation platforms critical for building high-quality training datasets; increasingly using AI-assisted labeling to reduce human effort.

- Intel Gaudi (Habana) / Arm Neoverse — Intel’s Gaudi accelerators and Arm Neoverse-based server CPUs offer cost-competitive alternatives to NVIDIA’s ecosystem for server-side AI compute.

Performance benchmarking via MLPerf, together with the documentation published in Hugging Face Model Cards, gives enterprises standardized points of comparison across these models and hardware options, supporting evidence-based procurement decisions.

Frequently Asked Questions (FAQs)

What are the top artificial intelligence trends to watch in 2026?

The top AI trends in 2026 include autonomous AI agents and multi-agent orchestration, multimodal AI systems that process text/image/audio simultaneously, Edge AI deployment on local devices (powered by NVIDIA Jetson, Apple M-series, and Qualcomm NPUs), hyper-personalization at scale, synthetic data generation for model training, and maturing ethical frameworks including the EU AI Act and NIST AI RMF.

How will autonomous AI agents change enterprise workflows by 2026?

By 2026, autonomous AI agents will transform enterprise workflows by orchestrating entire end-to-end processes without human intervention at each step. Unlike simple automation, agents can plan, decide, and adapt in real time — for example, automatically rerouting supply chains when disruptions are detected, or triaging customer service tickets and escalating complex cases. Vendors including Microsoft Azure AI, Google DeepMind, and AWS are building the infrastructure to deploy these agents at enterprise scale.

What regulatory frameworks govern AI deployment by 2026?

By 2026, most of the EU AI Act’s obligations apply: the Act entered into force in August 2024 and phases in its requirements, with the bulk taking effect in August 2026. It classifies AI systems by risk tier (unacceptable, high, limited, minimal) and requires conformity assessments for high-risk applications (such as healthcare, HR, and critical infrastructure). The NIST AI RMF provides voluntary guidance for U.S. organizations, while the OECD AI Principles establish international norms on transparency and accountability. Companies operating globally must align AI products with whichever frameworks apply in their markets.
